Research Article: 2019 Vol: 23 Issue: 1

Culture in Interdependent Critical Infrastructure

Kelly Calder Muzyczka, University of Florida

Kelly Chapman, University of Florida

Christopher McCarty, University of Florida

Abstract

This paper examines the presence and role of culture in the transportation, power, and communication industries. These infrastructure systems are tightly connected, so when one system fails, the failure often cascades to the others. These cascading risks are partly due to the number of components, internal and external, necessary for a fully functioning system. People interact with these systems daily, both those who work with them and everyday users. Through mixed methods, this study tests whether a cohesive work culture is present in these systems. With free-list interviews and cultural consensus analysis, the authors identify system-halting disturbances both within each system and throughout all industries. The degree to which participants agree on the frequency and importance of these problems measures the industry culture. The authors found agreement within each industry’s sample on major system failures. The amount of time an individual has worked in the industry positively correlates with the frequency of training. A further application of this research is understanding how industry culture affects information dissemination, decision-making, and protocol adherence.

Keywords

Human Vulnerability, Cultural Consensus Analysis, Culture, Mixed Methods, Interdependent Infrastructure.

Introduction

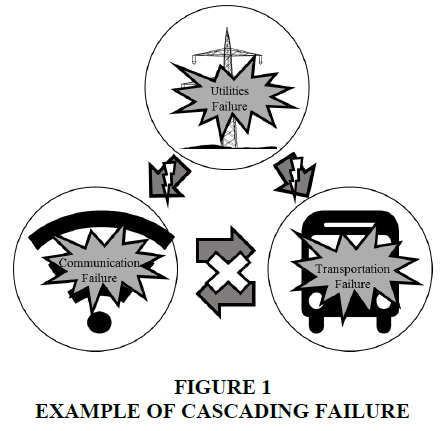

The 2003 blackout in the northeastern United States is one of many examples of cascading failures within the transportation, communication, and power industries (Andersson et al., 2005; Grinberg et al., 2017). Because the power system is far reaching, the causes of its failures are as well. In this example, improperly trimmed trees near power lines were the primary trigger of the blackout. Models estimating power loads and distribution did not predict the events leading up to this blackout, nor did they estimate the resulting cascading impact. Although this blackout was not predicted, the causes underlying this failure are long-standing issues within these industries. Underlying issues in the power industry leading to this and other failures include two human resource related issues: lack of adherence to industry policy and inadequate management (Force & Abraham, 2004). Resources depending on this power load, such as public transportation, purchasing devices (credit card readers, ATMs), landline telephones, and Internet routers, were all temporarily out of service. This power outage and the resulting cascade completely disconnected many components of the interdependent system shown in Figure 1.

Modern power systems incorporate advanced technology to manage power loads and warn workers about potential failures. Automation reduces the risk of system failures, yet manual overrides are also required in anticipation of unexpected issues and failures (Zheng et al., 2004). Though necessary, manual overrides introduce human errors that can cascade through interrelated systems.

The growing complexity and scale of interdependent critical infrastructure contributes to the rise and increased severity of cascading failures (Gao et al., 2012; Little, 2003). Humans’ effect on these problems is best understood by viewing people and the infrastructure they interact with as two interdependent systems. Both the infrastructure and the human systems have multiple connected components that must communicate with each other. Failure to do so, or miscommunication, can cause errors. Minimizing error begins by understanding the root of the error, which can stem from either the individuals working within the systems or the systems themselves. How humans interact with these systems can be hard to predict, introducing system vulnerabilities (Helbing, 2013). Models and taxonomic systems exist to better predict, understand, and analyze these human errors (Embrey, 2005; Gordon et al., 2005). A classic example is Rasmussen’s classification system for reporting and analyzing human error (Rasmussen & Vicente, 1989). This taxonomic approach categorizes different types of human errors, the systems involved, and the consequences. A modern update to this is the Human Factors Investigation Tool (HFIT), which classifies accidents into different categories and records the causes of the error, how it was fixed, and related thought processes (Gordon et al., 2005). A major goal of the HFIT is to learn from mistakes in the past and create safer, better infrastructure systems through the people working on them. Today, infrastructure research incorporates modern computer modeling technology and predictive algorithms (Zhai et al., 2017; Zio, 2016). These new technologies incorporate human interactions with modern large-scale infrastructure and result in more accurate predictions. Examples include models that incorporate hierarchical work structures to create accident “profiles”, models that incorporate past failure data into future predictions, and models that use accident categories and subcategories to accurately assess risks involving humans (Embrey, 2005). Recently there has been a push towards designing holistic and integrative research to tackle these large-scale problems (Laugé et al., 2015).

Because human behavior is difficult to model and ever changing, it is important to understand how industry culture affects these decisions and what demographics, if any, are correlated with a more cohesive culture. The culture of a workplace shapes the environment and worker decisions. It can increase productive behaviors and habits or provide distractions and protocol-breaking shortcuts (Braun et al., 2015). To better understand how this culture affects decision-making in these critical infrastructures, the first step is identifying whether there is a culture and how industry members define it.

Culture is widely defined as a set of values, beliefs, or customs that are shared, learned, and transmitted generationally. Within the power, transportation, and communication industries, there are customs, procedures, and protocols that may be shared from member to member in both formal and informal ways. Knowing how industry members react to system vulnerabilities becomes exceptionally critical when they are responding to an infrastructural failure. It becomes even more critical when their actions contribute to an infrastructural failure.

Decision-making and groupthink have been studied for decades (Janis, 1971; Braun et al., 2015; Esser, 1998). Various outcomes, people, and protocols influence each decision made, from a road closure to a government shutdown, and each decision can also be influenced by the surrounding social group. Understanding what factors increase cohesion within a work culture is helpful for enacting positive change. Fitzgerald suggests that cultural change must start in management (Fitzgerald, 1988). Studies have shown that ongoing training and group cohesion are two factors that increase productivity (Council, 2015). It is argued that there are ways to manage and control the culture employees are embedded within (Fitzgerald, 1988). Implementing standard protocols is one example companies frequently use. Employees can reference these protocols when they face unfamiliar or emergency situations. Groupthink occurs when an individual is influenced more by a group than by their own opinions or beliefs. Often, this is seen when a group supports and encourages thoughts and behaviors that may be detrimental to an outcome (Janis, 1971; Esser, 1998). There are mixed views on whether groupthink leads to more positive decision-making and fewer vulnerabilities (Branson et al., 2010). Regardless, decisions made within work environments can have serious repercussions, and it is important to understand the relationship between group culture and associated risk factors.

The decisions people make in these industries are not isolated events; they happen within an industry or organization and amongst coworkers (Van Laere, 2018). Understanding what agreement, if any, these individuals have regarding infrastructure vulnerabilities aids in understanding potential human-related vulnerabilities within the industry. We hypothesize that frequency of training and time in the industry positively correlate with competency scores. Second, we hypothesize that within the sample, all industries have a cohesive culture. The significance and meaning of this cohesive culture is explored further in later research.

Methods

A mixed-methods approach provides many different data points and perspectives on these research questions (Small, 2011). Two different survey methods were used in this research: free lists and cultural consensus analysis. Both are used to identify common errors in each industry (transportation, power, and communication) and whether people agree on these errors. A free list survey begins with a purposefully open-ended question and allows respondents to provide as many responses as they feel necessary and relevant (Weller & Romney, 1988). The cultural consensus survey provides participants with a list of different industry-specific errors that could lead to a failure and asks them to rank each one from most likely to least likely to happen. In addition, the cultural consensus survey collected demographic data: age, sex, education level, frequency of training, and length of time in industry. The cultural consensus survey also asked participants to select from a list of potential work-inhibiting factors, such as being tired or multitasking. Lastly, participants were prompted to provide their own definition of an industry failure.

These methods contribute to the following research goals: a basic knowledge of each industry, an understanding of what operation-halting errors often arise in these different areas, how industry members perceive their industry, how they solve problems, and whether there is agreement among them. How people in these industries function is important because it is within these work structures that problems not only arise but are also resolved (Buldyrev et al., 2010).

Free List Surveys

Free listing is an ideal method for collecting preliminary data on lesser-known topics or areas. The flexibility and survey style encourage participants to list more information than they might with a more structured and rigid questionnaire. Free listing provided a baseline list of errors in these industries, which was critical for creating accurate follow-up surveys. This type of survey may ask participants many different free list questions; however, our survey consisted only of the question below.

“What could go VERY wrong with the systems of the [fill in the name of the system the respondent works in (power, transportation, or communication)] industry and how would people in your line of work respond?”

This question is intentionally broad to reduce bias in respondent answers. Respondents were encouraged to list as many items as possible. Individuals were recruited to take this survey through visits to field sites, such as truck stops, electrician and cable company offices, and IT departments. Additionally, this research was advertised through flyers and on industry-specific message boards. Individuals could take the survey if they were current or former workers in the transportation, communication, or power industries. Most of these surveys were completed face-to-face (n = 20); however, due to scheduling conflicts, one was conducted via email and another over the phone. The face-to-face and phone interviews were conducted with pen and paper and later transcribed.

Cultural Consensus

The free list survey provided the basis for the cultural consensus survey questions. This method is designed to identify whether a cohesive culture is present among a group of participants (Weller & Romney, 1988). This can be accomplished using two different approaches, the formal or the informal. The formal model uses multiple-choice, open-ended, or true/false questions. The informal model uses rank-order, ratio, or interval questions. This research uses the informal model with rank-order questions (Weller, 2007). These surveys are distributed among a group of people, and participant results are compared to each other and analyzed for similarities (Weller, 2007). This type of analysis does not cause consensus or define what the culture of a sample is; rather, it reports on its presence or absence. Consensus is determined by the eigen ratio of the first and second factors. If the variation of responses is explained by a single-factor solution, the eigen ratio will be 3:1 or greater, and this lack of variation indicates one culture is present (Weller, 2007).

This survey was created with Qualtrics and conducted entirely online. The sample requirements remained the same as for the free list survey. Individuals were recruited to participate through respondent-driven sampling, flyers, online forum posts, and personal contacts. A total of 75 surveys were collected, of which 55 were fully completed and used for this analysis. Because only a small number of competency scores were negative, these were viewed as anomalous responses, and 3 individuals were removed to normalize the data, resulting in a final sample size of 52: 32 transportation industry members, 10 individuals from the communication/IT industry, and 10 individuals from the power industry. The most frequent and salient items from the free list were included. Transportation industry members ranked 14 items, communication members ranked 13 items, and individuals from the power industry ranked 16 items. Table 1 lists each ranked item by industry. Participants were asked to reference their personal experience and rank items from most likely to least likely to occur. These rankings are entirely subjective and depend solely on individual opinions and experiences. This was emphasized to all participants.

Table 1: Complete List of Items Included in the Cultural Consensus Survey

| Transportation | Power | Communication |
| --- | --- | --- |
| Over regulation | Technical difficulties | Stopping communication |
| Scheduling delays | Systems down | Electromagnetic pulse |
| Lack of parking | Cascading disasters | Weather |
| Government intervention/overregulation | Explosions | Terrorism |
| Lack of funding | Lack of public education | Systems unknowingly compromised |
| Technical difficulties | Internet going down | Infrastructure failures |
| Lack of education among the public | VoIP telephony going down | Inexperienced human interactions with equipment |
| Lack of education among fellow transportation industry members | Terrorist activities | Malicious activities by internal personnel |
| Inconsiderate drivers | Weather | Malicious activities by external personnel |
| Long hours resulting in sleep deprivation | Natural disasters | Routers attacked |
| Major collision/accidents | Power surge | Security at risk |
| Communication servers down (radios, control towers, etc.) | Human error | Natural disaster |
| Government shutdown | Government shutdown | Power disconnection |
| Weather (natural disasters) | Server overload | |
| Human error | | |
| Government shutdown | | |

This analysis yields a few different measures. A general cultural consensus score is given to each industry. This score is the eigen ratio of the first and second factors and determines if a culture is present or not. This score is based on the variety of participant responses, resting on the theory that if individuals answer similarly, they are informed by the same culture. Individuals are also given a score. This is called a cultural competency score and it has nothing to do with individual intelligence. This score considers how a participant responds compared with every other participant and the survey answer key. Higher competency scores are given to participants who rank items similarly to the rest of the sample. Because this analysis measures the presence of culture, competency scores indicate how representative an individual is of that culture. The answer key associated with informal cultural consensus surveys is weighted by participant competency scores and estimates the “correct” response based on all survey results. There are no preconceived right or wrong ways to rank these items, and the key emerges from the data. It provides details on how each culture responds to each question, and where each item is ranked on average. With these results, a participant and item similarity matrix is also constructed using Pearson’s correlation measure (Weller, 2007).
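The measures described above can be illustrated with a minimal Python sketch. It is not the UCINET procedure used in this study: it assumes a plain eigen-decomposition of the respondent-by-respondent Pearson matrix (consensus software typically factors the matrix without its diagonal), it treats first-factor loadings as competency scores, and it gives zero weight to negative scores when building the answer key. The function name `consensus_summary` and the rankings are hypothetical.

```python
import numpy as np

def consensus_summary(ranks):
    """Summarize agreement in a respondents-by-items array of rank-order answers."""
    ranks = np.asarray(ranks, dtype=float)
    # Respondent-by-respondent Pearson correlations (each row is one respondent).
    agreement = np.corrcoef(ranks)
    # Factor the agreement matrix; eigh returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(agreement)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    eigen_ratio = eigvals[0] / eigvals[1]
    # Assumption: first-factor loadings serve as competency scores in this sketch.
    competency = eigvecs[:, 0] * np.sqrt(eigvals[0])
    if competency.sum() < 0:  # eigenvector sign is arbitrary; orient toward the majority
        competency = -competency
    # Answer key: competency-weighted mean rank per item
    # (assumption: negative scores receive zero weight).
    weights = np.clip(competency, 0, None)
    answer_key = weights @ ranks / weights.sum()
    return eigen_ratio, competency, answer_key

# Hypothetical data: five respondents rank five items; the last one reverses the order.
example_ranks = [
    [1, 2, 3, 4, 5],
    [1, 3, 2, 4, 5],
    [2, 1, 3, 4, 5],
    [1, 2, 3, 5, 4],
    [5, 4, 3, 2, 1],
]
eigen_ratio, competency, answer_key = consensus_summary(example_ranks)
print(round(eigen_ratio, 2), competency.round(2), answer_key.round(2))
```

In this toy sample the eigen ratio clears the 3:1 guideline, and the respondent who ranks the items in reverse receives a negative competency score, which is the kind of response treated as anomalous in this analysis.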

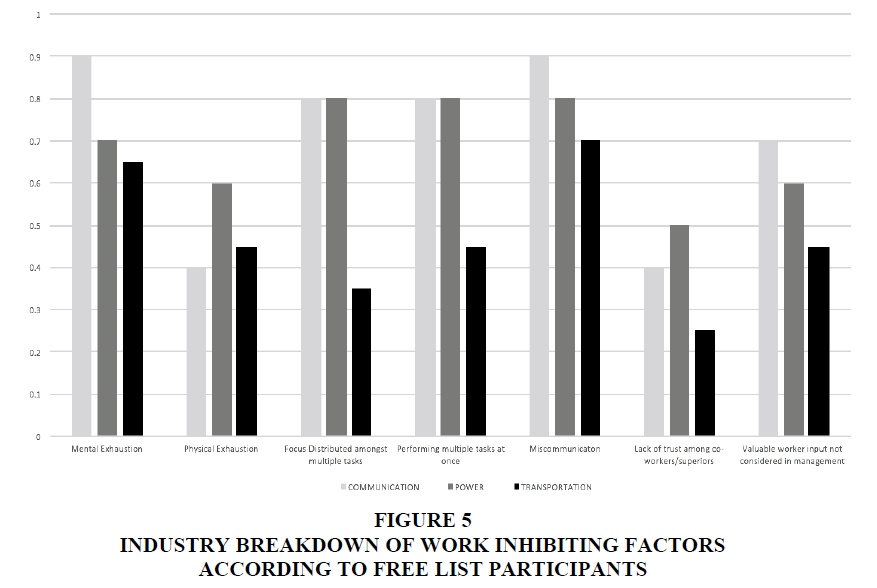

In addition to this cultural consensus data, the survey also asked participants for their age, gender, education level, length of time in industry, and how frequently they receive training. Using multiple regression, the relationship between these attribute data and the dependent variable, competency scores, was calculated. Participants were also asked to select from a list of potential human-related causes of error that they have experienced or observed. Items include: mental exhaustion, physical exhaustion, focus distributed amongst multiple tasks, performing multiple tasks at once, miscommunication, lack of trust among co-workers/superiors, and valuable worker input not considered in management. These all come from human error literature as well as comments from the free list survey. Finally, participants were given the option to define a failure in their industry. This was an open-ended and optional question; similarities within and between industries in these definitions were analyzed.

Analysis

Free list data were analyzed using the Free List Analysis Macro for Excel (FLAME) (Pennec et al., 2012). Frequency and saliency were measured for each industry. The Smith index was used to measure item saliency (Smith & Borgatti, 1997; Sutrop, 2001). The Smith index considers the rank of an item in each list in the sample, the length of the lists, and the frequency with which each term is named (Smith & Borgatti, 1997). Only the most frequent and salient items were included in the cultural consensus survey.
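As an illustration of how frequency and salience differ, the following Python sketch computes both from ordered free lists using the commonly cited form of the Smith index: for each list that names an item, the item scores (list length − position + 1) / list length, and the scores are averaged over all lists. This is a sketch under that assumption, not the FLAME implementation; the function name `smith_index` and the example lists are hypothetical, and each list is assumed to name an item at most once.

```python
def smith_index(free_lists):
    """Return {item: (frequency, salience)} for a collection of ordered free lists."""
    n_lists = len(free_lists)
    totals, counts = {}, {}
    for items in free_lists:
        length = len(items)
        for position, item in enumerate(items, start=1):
            # Earlier mentions weigh more: the first item scores 1, the last 1/length.
            totals[item] = totals.get(item, 0.0) + (length - position + 1) / length
            counts[item] = counts.get(item, 0) + 1
    # Frequency is the share of lists naming the item; salience averages over all lists.
    return {item: (counts[item] / n_lists, totals[item] / n_lists) for item in totals}

# Hypothetical free lists from three respondents.
lists = [
    ["weather", "human error"],
    ["human error"],
    ["weather", "systems down", "human error"],
]
scores = smith_index(lists)
for item, (frequency, salience) in scores.items():
    print(item, round(frequency, 2), round(salience, 3))
```

In this toy example "human error" is named by every respondent but is less salient than "weather", because it tends to appear later in the lists. An item can therefore have a high frequency and a comparatively low Smith index.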

Cultural consensus analysis was done using UCINET, and multiple regression analysis was done in R (Borgatti et al., 2002). Additionally, using Excel, work-inhibiting factors were analyzed for frequency and compared between industries. The final question, which asked participants to define a failure in their industry, was coded for specific themes, and keyword frequency was used to analyze and compare definitions.

Results

Free List Surveys

The results of the free list surveys were analyzed within each industry, and those results were then compared across industries. The three most frequently listed items and their corresponding Smith saliency index values are compiled in Table 2. The Smith index ranges from 0 to 1, with 1 being the most salient and 0 meaning the item was not listed at all.

Table 2: Top 3 Free List Responses by Frequency

| Industry | Item Name | Frequency | Smith Index |
| --- | --- | --- | --- |
| Transportation | Over regulation | 0.67 | 0.518 |
| | Scheduling delays | 0.58 | 0.316 |
| | Lack of parking | 0.42 | 0.239 |
| Power | Technical difficulties | 0.67 | 0.433 |
| | Systems down | 0.67 | 0.178 |
| | Cascading failures | 0.33 | 0.327 |
| Communication | Stopping communication | 0.57 | 0.327 |
| | EMP (Electromagnetic Pulse) | 0.29 | 0.133 |
| | Weather | 0.29 | 0.286 |

The category “failures related to outside interference” was created to represent a theme common across industries. Although the type of outside interference differed by industry, items in this category were frequently listed by participants. In the transportation industry, outside interference items included government over-regulation and lack of education of the public. Seven out of 12 industry members mentioned one or both of these problems. For the communication industry, outside interference compromising systems was listed by 6 out of 7 respondents. The power industry reported the lowest risk of outside interference; however, of the three respondents, one listed untrained public contact with power machinery as a potential risk for failure, while another listed terrorist attacks.

Another theme common throughout all industries was technical difficulties. This term was listed explicitly by members of the transportation and power industries. However, the small sample size (n = 3) for the power industry should be noted; while it provides an important knowledge base, it warrants further investigation. In the communication industry, stopping communications (a broad term for any sort of break in communications, which may have multiple causes) was the most frequent response overall. Respondents from the power industry also reported power systems going down and the Internet crashing as common infrastructure-related failures.

Cultural Consensus

Table 3 is an overview of sample characteristics and consensus by industry. All three industries returned cultural consensus, indicating that each sample was drawn from a single culture. These consensus data are visualized with multi-dimensional scaling. This type of visualization represents the data in two dimensions (Kruskal & Wish, 1978; Borg & Groenen, 1997). Items that are highly correlated with one another are plotted closer to each other, and vice versa. The two dimensions along the x and y axes arise naturally because multi-dimensional scaling places similar items nearer to each other and dissimilar items farther apart.

Table 3: Cultural Consensus Sample Characteristics and Eigen Ratios by Industry

| Industry | Total number interviewed | Eigen ratio | % Male | Mean age range | Mean education level | Most reported training frequency |
| --- | --- | --- | --- | --- | --- | --- |
| Transportation | 32 | 3.031 | 81.08 | 45-54 | Some college or associate’s | Frequently |
| Power | 10 | 4.512 | 100 | 45-54 | Bachelor’s degree | Frequently |
| Communication | 10 | 3.042 | 75 | 35-44 | Bachelor’s degree | Frequently |

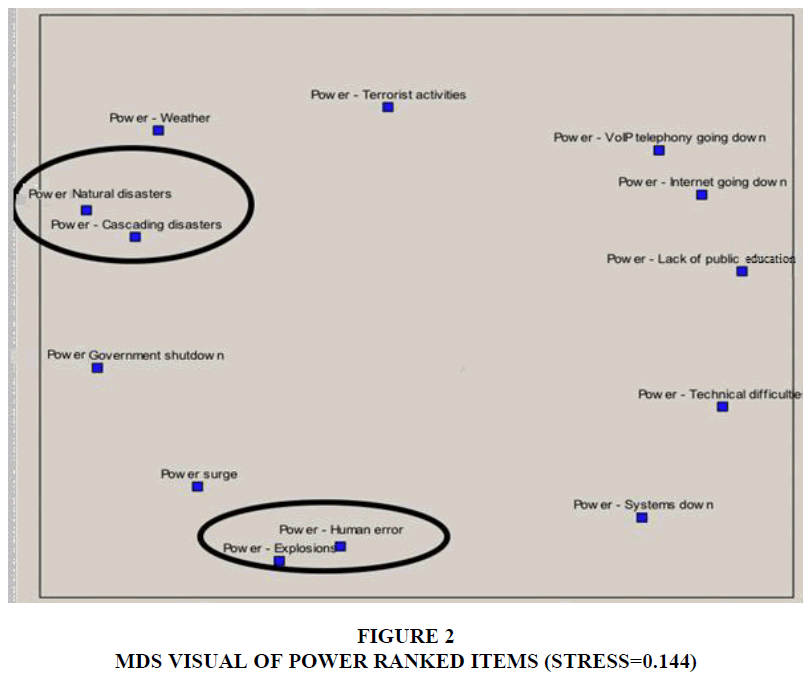

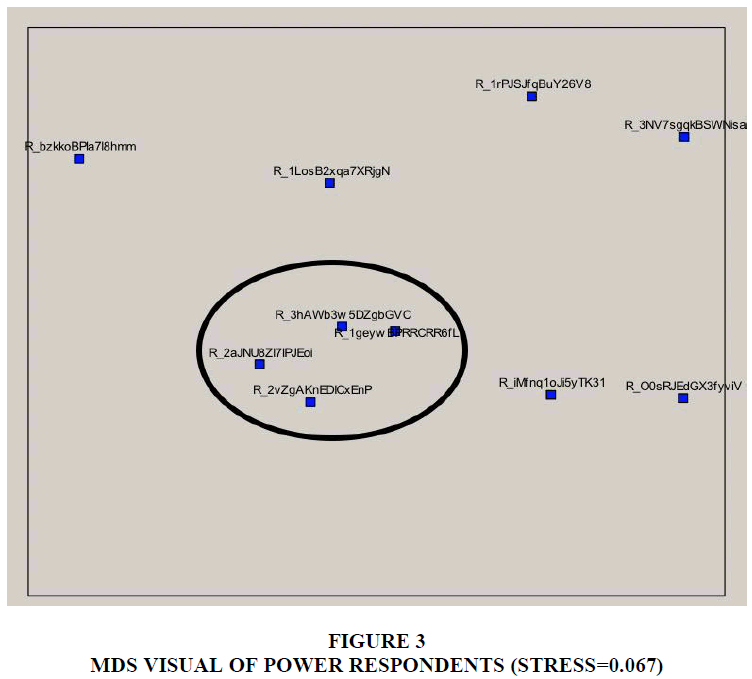

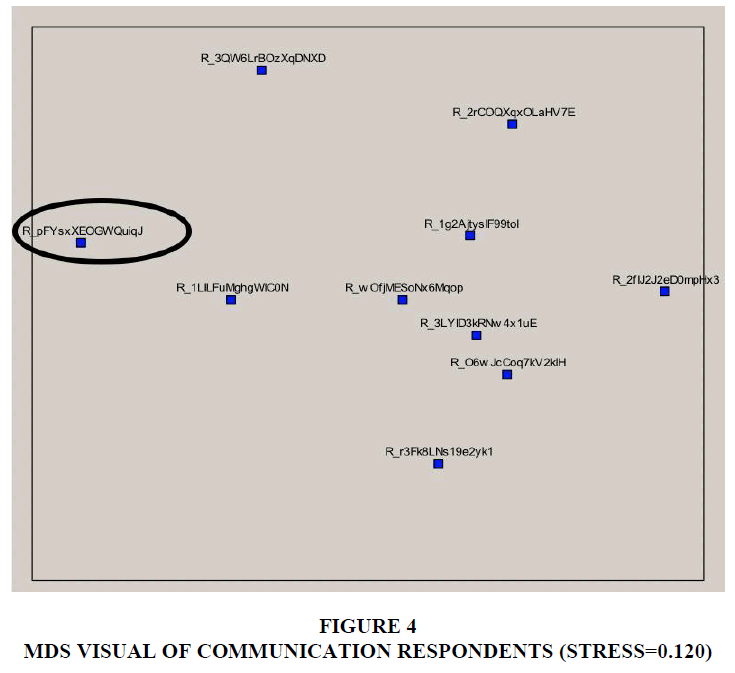

Figures 2-4 are multi-dimensional scaling (MDS) visualizations of the respondent and item Pearson correlation matrices for each industry. The item MDS visualizes each item on the survey by how similarly it was ranked in relation to every other item. The respondent MDS visualizes how similarly the participants responded. The stress score of an MDS visualization measures how well the two-dimensional plot matches the data. A stress level of 0.2 or less indicates the visualization accurately represents the data. All MDS graphs had stress levels below 0.2 except the communication item MDS and the transportation respondent MDS. This difference is apparent in the lack of structure in the MDS graphs with higher stress levels.
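To make the stress score concrete, the following Python sketch embeds a dissimilarity matrix in two dimensions and reports Kruskal's stress-1. It is only an approximation of the workflow described here: it assumes classical (metric) MDS and uses 1 − r as the dissimilarity, whereas MDS software often fits a non-metric solution, so the values will not match published stress scores. The function names and the rankings are hypothetical.

```python
import numpy as np

def classical_mds(dissim, dims=2):
    """Embed a symmetric dissimilarity matrix in `dims` dimensions (Torgerson scaling)."""
    n = dissim.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    # Double-center the squared dissimilarities to obtain an inner-product matrix.
    gram = -0.5 * centering @ (dissim ** 2) @ centering
    eigvals, eigvecs = np.linalg.eigh(gram)
    top = np.argsort(eigvals)[::-1][:dims]
    return eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0, None))

def stress_1(dissim, coords):
    """Kruskal's stress-1 between plotted distances and the input dissimilarities."""
    diffs = coords[:, None, :] - coords[None, :, :]
    dist = np.sqrt((diffs ** 2).sum(axis=-1))
    upper = np.triu_indices_from(dissim, k=1)  # count each pair once
    return np.sqrt(((dist[upper] - dissim[upper]) ** 2).sum() / (dist[upper] ** 2).sum())

# Hypothetical rankings: rows are respondents, columns are items.
ranks = np.array([
    [1, 2, 3, 4, 5],
    [1, 3, 2, 4, 5],
    [2, 1, 3, 4, 5],
    [1, 2, 3, 5, 4],
    [5, 4, 3, 2, 1],
], dtype=float)
item_dissim = 1 - np.corrcoef(ranks.T)  # item-by-item Pearson matrix as dissimilarities
coords = classical_mds(item_dissim)
stress = stress_1(item_dissim, coords)
print(round(float(stress), 3))
```

Passing `1 - np.corrcoef(ranks)` instead would give the respondent version of the plot. A stress near zero means the two-dimensional distances reproduce the dissimilarities almost exactly.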

Overall, the power industry had the highest eigenvalue ratio (4.512), making it the most cohesive culture. The three highest ranked items in the power industry were “technical difficulties”, “human error”, and “weather”. Participants often ranked “natural disasters” and “cascading disasters” in similar positions. “Human error” and “explosions” were also ranked closely to one another. This clustering is seen in Figure 2. Figure 3 demonstrates how the four respondents with the highest competency scores are clustered together, showing similarity in rankings among these four participants. Three out of four of these individuals reported receiving training frequently.

The communication industry had the second highest consensus (3.042). The top three ranked items in the communication industry were “communication failure or stoppage”, “human error”, and “bad weather”. This list is almost identical to that of the power industry, with both reporting weather and human error in their top three ranked items. The structure of the MDS for both respondents and items closely resembles that of the power industry. The respondents clustered together in Figure 4 are again those with the highest competency scores. All three of these individuals receive training frequently. The individual who received a negative competency score is far to the left, separated graphically due to dissimilarity.

The transportation industry had the lowest consensus (3.031). Prior to removing the three participants with negative competency scores, the eigen ratio was 2.917, narrowly missing the 3:1 suggested ratio. The top three ranked items were “overregulating the industry” (broadly), “scheduling delays”, and “the government intervening and overregulating the industry”. The MDS visualizations for the transportation industry respondents had the highest level of stress and the item MDS had the second highest level of stress (second to the communication industry item MDS).

Multiple regressions were used to test the relationships between demographic variables and competency scores. An individual with a higher competency score is more representative of the culture than someone with a lower score. Therefore, variables with a significant relationship to competency scores provide insight into the demographics associated with the most representative respondents. Of the four multiple regression models, one for each industry and one combining all three industries, education level was the only variable with a significant relationship to competency scores. The more education an individual had, the lower their competency score. Within the communication industry, this relationship had a p-value of 0.023; in all industries combined, it was weaker, with a p-value of 0.088.
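The form of such a model can be sketched in Python with ordinary least squares. The study's regressions were run in R on the survey data; the example below instead uses simulated values that are not the study's data, with a negative education effect built in purely for illustration. The function name `ols`, the variable coding, and all numbers are assumptions.

```python
import numpy as np

def ols(predictors, outcome):
    """Ordinary least squares with an intercept; returns coefficients and t statistics."""
    X = np.column_stack([np.ones(len(outcome)), predictors])
    coef, *_ = np.linalg.lstsq(X, outcome, rcond=None)
    resid = outcome - X @ coef
    dof = X.shape[0] - X.shape[1]  # residual degrees of freedom
    sigma2 = resid @ resid / dof
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    return coef, coef / se

# Simulated respondents (NOT the study data): education coded 1-5,
# years in the industry, and training frequency coded 1-4.
rng = np.random.default_rng(0)
n = 40
education = rng.integers(1, 6, n).astype(float)
years = rng.uniform(0, 30, n)
training = rng.integers(1, 5, n).astype(float)
# Competency is generated with a built-in negative education effect plus noise.
competency = 0.8 - 0.05 * education + rng.normal(0, 0.05, n)

coef, t_stats = ols(np.column_stack([education, years, training]), competency)
for name, b, t in zip(["intercept", "education", "years", "training"], coef, t_stats):
    print(f"{name:10s} b={b: .3f} t={t: .2f}")
```

A p-value for each coefficient would follow from comparing its t statistic with a t distribution on the residual degrees of freedom, which statistical packages such as R report directly.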

Figure 5 highlights each industry’s views on work-inhibiting factors. The employees in these industries all work with critical infrastructure, but in different environments and contexts. Transportation industry members selected fewer performance-inhibiting factors than members of any other industry. On average, miscommunication and mental exhaustion were the two most selected factors across industries. Some items were more industry specific. Physical exhaustion was selected by only 27% of communication industry members, 46% of transportation industry members, and 63% of power industry members.

Human error is an inevitable component of infrastructure failures. Understanding the different environments and cultures individuals work in helps explain potential sources of error as well as solutions. When people are involved in system failures or solutions, their influences and decision-making processes are important for better understanding these systems and their outcomes. There are many different work environments, some that facilitate the introduction of new protocols and knowledge and others that do not (Branson et al., 2010). This research incorporates past research on human involvement in critical infrastructure while introducing additional methods to better understand this relationship.

Discussion

Understanding the role workplace culture has in employee decision-making and infrastructure failures can increase the accuracy of both established and new predictive models. Modeling these systems requires the ability to incorporate many components and potential outcomes based on both real-world data and theoretical frameworks. These models contribute to a better understanding of the interdependencies of critical infrastructure. Lu et al. (2016) argue that incorporating the human element of these systems is best done by treating the people as an interdependent system themselves. While they specifically refer to the communities impacted by infrastructure failures, this paper furthers this approach by applying it to the individuals working within the infrastructure systems.

Understanding how workplace culture impacts the resiliency and/or vulnerabilities of the transportation, power, and communication industries requires incorporating existing literature on workplace culture, decision-making, and interdependent infrastructure failures (Andersson et al., 2005; Hines et al., 2009; Embrey, 2005; Rasmussen & Vicente, 1989). Our research continues the growing dialogue between these fields of study.

This research furthers concepts explored through the Human Factors Investigation Tool (HFIT) by examining specific workplace cultures and the widely (and rarely) held beliefs among those who work in these industries (Gordon et al., 2005). The HFIT evaluates 4 areas of human-infrastructure interaction in regard to infrastructure failures: (a) the action errors occurring immediately prior to the incident, (b) error recovery mechanisms, in the case of near misses, (c) the thought processes which lead to the action error and (d) the underlying causes (Gordon et al., 2005). Through asking participants about potential sources of failure within their industry, we gain a better understanding of the role individuals in these industries have in both failure prevention and recovery. Additionally, we test if there is consensus among industry members and how that contributes to their roles in critical infrastructure systems.

Anthropological methods such as cultural consensus analysis provide a deeper understanding of how the individuals in these industries communicate and how culture plays a role in these communications. This type of information is important to include in predictive models, as the individuals are both an important resource for system recovery and a potential source of system failure. The work-inhibiting factors presented in this research add to previous research on performance-influencing factors that may increase the likelihood of human error (Embrey, 2005). Additionally, this study focuses specifically on the presence or lack of culture among these industries and how this culture may be an important factor to include in future predictive models and critical infrastructure research.

Conclusion

The cultural consensus surveys provided insight into the presence of culture within these industries. All three industries returned an eigen ratio indicating that, among the sampled individuals, there is one culture present. While this research shows that there is culture present among these industries, further investigation into workplace dynamics, including a larger sample size, would strengthen the conclusions drawn in this paper. The authors also further explored demographic data in relation to these cultural consensus scores.

The negative relationship between education level and competency scores may be meaningful in two ways. Separating participants into two groups based on education level may result in a higher eigen ratio. This two-culture solution could be the result of education-based differences in professional positions. Individuals with a Master’s or PhD likely fill different roles than those with other educational backgrounds. Individuals may view industry problems differently based on their job and role in the infrastructure system. Those in managerial positions do not have the same hands-on daily experience as individuals working directly with these systems every day. The communication industry selected “valuable worker input not considered in management” and “miscommunication” as work-inhibiting factors more often than power or transportation and was also the only industry with a significant relationship between education level and competency score. This calls for further investigation into the hierarchy and structure of these industries. How are workers being managed, and how might this contribute to infrastructure failures? Additionally, this may suggest that professional training and work experience are more influential in shaping these industry cultures than formal education. Increasing sample size, especially for the power industry, is an additional strategy for further research on this topic.

Though we found each industry has a cohesive culture, further research is needed to understand the significance and implications of a single-culture solution in these industries. Social network analysis would uncover additional work structures, information flow, and decision-making influences. Future research will involve personal network interviews with industry members to better understand these influences and how they impact decisions. Testing for the presence of a single culture was the first step; understanding the ways culture impacts critical infrastructure failures is the next.

Acknowledgement

This work was funded by the National Science Foundation under Grant No. EFRI-1441231.

References

- Andersson, G., Donalek, P., Farmer, R., Hatziargyriou, N., Kamwa, I., Kundur, P., Martins, N., Paserba, J., Pourbeik, P., Sanchez-Gasca, J., & Schulz, R. (2005). Causes of the 2003 major grid blackouts in North America and Europe, and recommended means to improve system dynamic performance. IEEE Transactions on Power Systems, 20(4), 1922-1928.

- Borg, I., Groenen, P.J., & Mair, P. (2012). Applied multidimensional scaling. Springer Science & Business Media.

- Branson, L., Steele, N.L., & Sung, C.H. (2010). When two heads are worse than one: Impact of group style and information type on performance evaluation. Journal of Business and Behavioral Sciences, 22(1), 75-84.

- Braun, S., Frey, D., Brodbeck, F.C., & Hentschel, T. (2015). Group processes in organizations. Elsevier.

- Buldyrev, S.V., Parshani, R., Paul, G., Stanley, H.E., & Havlin, S. (2010). Catastrophic cascade of failures in interdependent networks. Nature, 464(7291), 1025.

- Embrey, D. (2005). Understanding human behaviour and error. Human Reliability Associates, 1(2005), 1-10.

- Esser, J.K. (1998). Alive and well after 25 years: A review of groupthink research. Organizational Behavior and Human Decision Processes, 73(2-3), 116-141.

- Fitzgerald, T.H. (1988). Can change in organizational culture really be managed? Organizational Dynamics, 17(2), 5-15.

- Force, U.C., & Abraham, S. (2004). U.S.-Canada power system outage task force.

- Gao, J., Buldyrev, S.V., Stanley, H.E., & Havlin, S. (2012). Networks formed from interdependent networks. Nature Physics, 8(1), 40.

- Gordon, R., Flin, R., & Mearns, K. (2005). Designing and evaluating a human factors investigation tool (HFIT) for accident analysis. Safety Science, 43(3), 147-171.

- Grinberg, E., Ostrower, J., Park, M., & Zdanowicz, C. (2017). Atlanta’s Hartsfield-Jackson Airport restores power after crippling outage. https://edition.cnn.com/2017/12/17/us/atlanta-airport-power-outage/index.html

- Helbing, D. (2013). Globally networked risks and how to respond. Nature, 497(7447), 51-59.

- Janis, I.L. (1971). Groupthink. Psychology Today, 5(6), 43-46.

- Korkali, M., Veneman, J.G., Tivnan, B.F., Bagrow, J.P., & Hines, P.D. (2017). Reducing cascading failure risk by increasing infrastructure network interdependence. Scientific Reports, 7(1), 1-14.

- Kruskal, J.B., & Wish, M. (1978). Multidimensional scaling. Beverly Hills, CA.

- Laugé, A., Hernantes, J., & Sarriegi, J.M. (2015). Critical infrastructure dependencies: A holistic, dynamic and quantitative approach. International Journal of Critical Infrastructure Protection, 8, 16-23.

- Little, R.G. (2003). Toward more robust infrastructure: observations on improving the resilience and reliability of critical systems. Proceedings of the 36th Annual Hawaii International Conference on System Sciences.

- Lu, L., Wang, X., Wang, Z., Ouyang, Y., Roningen, J., Tweddale, S., Edwards, P., & Myers, N. (2016). Assessing socioeconomic impacts of cascading infrastructure disruptions in a dynamic human-infrastructure network. US Army Engineer Research and Development Center (ERDC) Champaign, United States.

- National Research Council. (2015). Enhancing the effectiveness of team science. National Academies Press.

- Pennec, F., Wencelius, J., Garine, E., Raimond, C., & Bohbot, H. (2012). FLAME v1.0: Free-list analysis under Microsoft Excel. Paris: CNRS.

- Rasmussen, J., & Vicente, K.J. (1989). Coping with human errors through system design: Implications for ecological interface design. International Journal of Man-Machine Studies, 31(5), 517-534.

- Small, M.L. (2011). How to conduct a mixed methods study: Recent trends in a rapidly growing literature. Annual Review of Sociology, 37, 57-86.

- Smith, J.J., & Borgatti, S.P. (1997). Salience counts-and so does accuracy: Correcting and updating a measure for free-list-item salience. Journal of Linguistic Anthropology, 7, 208-209.

- Sutrop, U. (2001). List task and a cognitive salience index. Field Methods, 13(3), 263-276.

- Van Laere, J., Berggren, P., Ibrahim, O., Larsson, A., & Kallin, S. (2018). A simulation-game to explore collective critical infrastructure resilience. Proceedings of 28th European Safety and Reliability Conference, ESREL in Trondheim, Norway, 1305-1312.

- Weller, S.C. (2007). Cultural consensus theory: Applications and frequently asked questions. Field Methods, 19(4), 339-368.

- Weller, S.C., & Romney, A.K. (1988). Systematic data collection. Sage publications.

- Zhai, C., Zhang, H., Xiao, G., & Pan, T. C. (2017). Modeling and identification of worst-case cascading failures in power systems.

- Zheng, X.Z., Tsuchiya, K., Sawaragi, T., Osuka, K., Tsujita, K., Horiguchi, Y., & Aoi, S. (2004). Development of human-machine interface in disaster-purposed search robot systems that serve as surrogates for human. Proceedings of IEEE International Conference on Robotics and Automation, 225-230.

- Zio, E. (2016). Challenges in the vulnerability and risk analysis of critical infrastructures. Reliability Engineering & System Safety, 152, 137-150.