Research Article: 2026 Vol: 30 Issue: 2

The Anatomy of Data Network Effects: Identifying key enablers through Systematic Review and Delphi-AHP Prioritisation

Amit Sareen, IIM Sambalpur

Amit Ss Jain, IIM Sambalpur

Citation Information: Sareen, A., & Jain, A.S. (2026). The anatomy of data network effects: Identifying key enablers through systematic review and Delphi–AHP prioritisation. Academy of Marketing Studies Journal, 30(2), 1-33.

Abstract

Digital platforms have redefined value creation by turning user interactions into self-reinforcing data feedback loops, known as Data Network Effects (DNEs). Although the concept has attracted growing academic interest, research on the mechanisms and conditions that enable DNEs remains fragmented. This study synthesises fifty-five high-quality publications to consolidate the enablers that support the formation and scalability of DNEs in AI-driven platform ecosystems. A systematic literature review based on the Theory-Context-Methodology (TCM) and Antecedents-Decisions-Outcomes (ADO) frameworks was followed by a two-stage analysis combining a Desk/Documentary Delphi with the Analytic Hierarchy Process (AHP). The Delphi stage distilled consensus on six key enablers (data stewardship and quality, algorithmic capability, governance and complementor strategy, feedback tightness and latency, user trust and overload, and leadership orientation), while the AHP stage prioritised their relative importance. Results show that high data quality, adaptive algorithms, and sound governance structures are the most influential drivers of sustainable DNEs. These findings extend platform and resource-based theories by showing how learning from data, rather than network size alone, drives competitive advantage in digital ecosystems. The study contributes methodological novelty through an evidence-based Delphi–AHP framework that blends conceptual synthesis with analytical validation. Managerially, it offers a prioritised roadmap for platform leaders to invest in data governance, algorithmic transparency, and organisational readiness for AI-enabled growth.

Keywords

Data Network Effects, Digital Platforms, Artificial Intelligence, Algorithmic Capability, Analytic Hierarchy Process, Platform Governance.

Introduction

Digital platforms have disrupted the global business landscape, with firms like Amazon, Uber, and YouTube pioneering entirely new business models. These platforms create value by brokering interactions and exchanges between two or more groups of stakeholders (Acs et al., 2021). This shift has prompted a long line of studies on how digital platforms function, develop, and sustain their competitive edge. In recent decades, researchers have concentrated mostly on the ecosystem processes linking end-users, complementors, and platform providers (Rietveld & Schilling, 2021).

The academic literature on platforms has evolved in several ways over the past three decades. Before the turn of the century, early work on platforms was grounded in the economic theory of network externalities, two-sided market pricing, and winner-take-all dynamics (Katz & Shapiro, 1994). Attention then shifted to the strategic management of digital platforms and their role in information systems. Scholars examined direct and indirect network effects, how platforms are launched, and how they compete (Trusov et al., 2009). The field subsequently expanded to encompass the architecture and governance of platforms (Tiwana et al., 2010).

Over time, platforms also came to be viewed as multi-faceted ecosystems, or even as meta-organisations, that require appropriate systems of coordination and governance (Gulati et al., 2012). This progression reflects a continuing inquiry into the root drivers of platform value. While traditional network effects rest on a well-studied conceptual framework, the rise of data and artificial intelligence (AI) as drivers of value creation has only recently begun to be examined systematically.

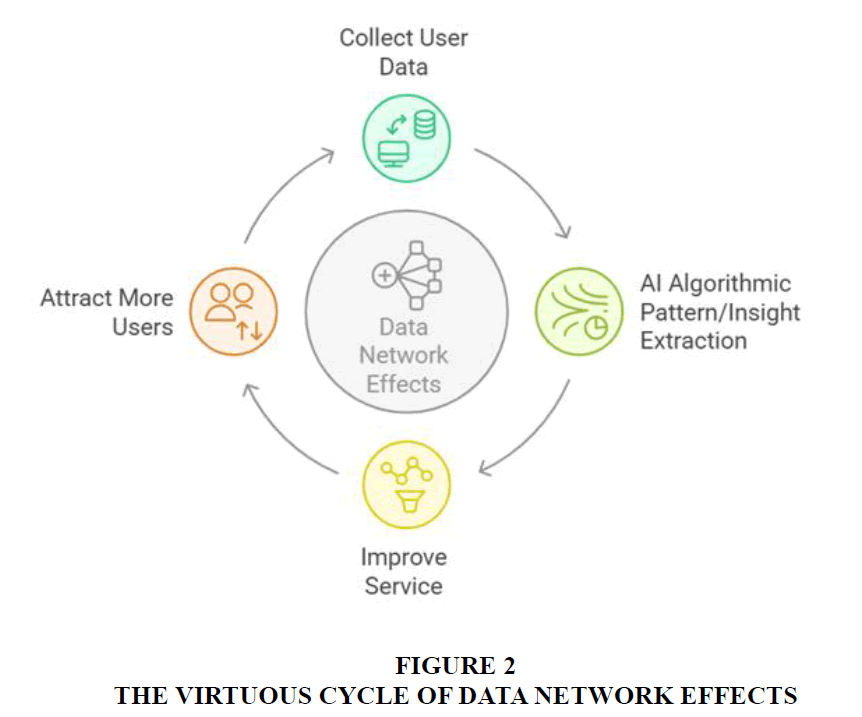

By the late 2010s, scholars began exploring the role of artificial intelligence (AI) and machine learning (ML) in digital platform ecosystems (Brynjolfsson et al., 2019). The integration of AI and ML into platform ecosystems has given rise to the theorisation of a distinct category of network effect: the data network effect (DNE) (Gregory et al., 2022). According to Gregory et al. (2022), data network effects (DNEs) occur when a digital platform's value increases as it gathers more user data. An increase in user data leads to better quality of services, which in turn attracts more users. The new users generate even more data, creating another loop in the positive feedback cycle. Over the past few years, the research landscape surrounding DNEs has expanded rapidly with the rise of AI and generative AI (GenAI) technologies. Recent studies show that platform value is no longer determined solely by user scale or data volume, but by how intelligently data is transformed into learning, personalisation, and predictive capabilities. Vomberg et al. (2023) highlighted how AI-driven feedback loops mitigate the “cold-start” problem in emerging digital ecosystems, while Climent et al. (2024) and Malgonde (2025) demonstrated that AI-enabled business models and generative recommender systems are redefining user engagement and retention. Knorr et al. (2025) further observed that data network effects are now shaped by multi-layered learning among algorithms, complementors, and users, moving beyond static models of network interaction.

Despite growing interest in DNE theory, the literature remains nascent and fragmented. DNEs lack a consistent definition, a clear set of conditions, and an understanding of the enablers that trigger them and help organisations achieve competitive advantage. Existing frameworks also still underrepresent the algorithmic learning and generative mechanisms that underpin modern DNEs. Given the fragmented empirical base, this study adopts a mixed-method design combining a modified Delphi and the Analytic Hierarchy Process (AHP) to consolidate theoretical insights from recent literature and identify the critical enablers of DNE success in AI-driven platforms. We therefore pursue the following research questions:

RQ1: What is a clear and comprehensive definition of DNEs for future research?

RQ2: What are the key enablers that trigger DNEs in AI platforms?

RQ3: Which focus areas should practitioners prioritise to realise DNEs in digital platforms and achieve sustainable competitive advantage?

RQ4: What are the emerging research directions to guide future study in the field of DNEs?

The rest of the paper is organised as follows. Section 2 reviews the evolution of digital platforms, the emergence of data network effects, and the role of AI and machine learning in shaping new forms of value creation. Section 3 outlines the research design, explaining the multi-stage approach that combines a systematic literature review with Delphi-based validation and AHP-driven prioritisation. Section 4 presents the findings of the systematic review using the TCM–ADO framework and synthesises the recurring patterns that led to the identification of six core enablers of DNEs. Section 5 details the Desk/Documentary Delphi and AHP processes used to refine, validate, and rank these enablers. Section 6 discusses the theoretical and managerial implications of the prioritised enablers and integrates them into a conceptual understanding of how DNEs are formed and sustained. Section 7 concludes the paper by highlighting contributions, limitations, and directions for future research.

Theoretical Background

We begin this section by discussing the existing literature on network effects and their key characteristics, followed by a brief review of the literature on DNEs. Although platform research is vast, we focus this background section on the references most relevant to our research topic.

Network effects

Broadly, network effects can be defined as the platform’s ability to match supply and demand efficiently enough to attract a large number of users, both ecosystem partners (supply) and end-users (demand). This matching of supply and demand is studied through the lens of network effects in platform-mediated ecosystems (Cullen & Farronato, 2021).

Network effects arise when the value of a product or service to a user increases with the number of its users. In the case of digital platforms, network effects are one of the critical drivers of scale, which in turn is one of the keys to market dominance. Existing research largely covers two categories: direct network effects and indirect network effects. Direct network effects (same-side network effects) arise when a product becomes more valuable to a user as its installed base grows. This effect is central to services in which user value comes from connections among users, such as telecom networks and social media platforms, but it is also present in other kinds of services, including those delivered over online platforms like Amazon. The path-breaking work of Katz and Shapiro (1985) on network externalities, competition, and compatibility offers an early economic analysis of these effects and emphasises their importance, particularly in technology and information goods markets. Indirect network effects (cross-side network effects) operate when the value of a product or service increases due to the existence of complementary goods or services (Katona et al., 2011). This is particularly relevant for two-sided digital platforms that bring together different user groups, such as buyers and sellers on Amazon and eBay. Rochet and Tirole’s (2003) work on platform competition in two-sided markets articulates how these effects underpin the economics of platforms that serve distinct but interdependent user groups.

The literature also explores the strategic implications of network effects for digital platforms. Cennamo and Santalo (2013) discuss strategies for leveraging network effects to achieve competitive advantage, such as platform envelopment, where a platform expands its services to encompass complementary markets. Furthermore, early growth and securing a critical mass of users is a recurring theme, as initial user acquisition can significantly influence a platform's ability to benefit from network effects (Rysman, 2009). Early user acquisition in multi-sided platforms depends on the availability of quality products and services from the supply side, leading to the well-known chicken-and-egg problem. If the platform provider acquires supply first but cannot attract enough users, suppliers will leave. Similarly, if the platform lacks a sufficient supply of quality products and services, users will leave (Veisdal, 2020).

DNEs

Recently, a new category of network effects has been theorised and termed data network effects (DNEs) (Gregory et al., 2022). According to this research, data network effects are triggered when the value to a user increases as the platform learns (using AI/ML) from the data it collects from users, creating a virtuous loop. For example, the more Netflix learns about its users from the data it collects whenever they watch content, the better it can personalise its recommendations to their preferences, enhancing the end-user experience and creating user value. This research proposes that the platform’s AI/ML capability, i.e., its ability to improve through data-driven learning, triggers a new type of platform externality, in which a user’s experience and utility from the platform are correlated with data-driven improvements.

The key distinction between traditional network effects and DNEs is that DNEs stem from the platform’s AI capabilities to learn from data and create more personalised services for users (Haftor et al., 2021; Vomberg et al., 2023). Traditional network effects, by contrast, stem from the size of the supply side and the interactions among demand-side users, which enhance the platform’s value for more users on both sides. A further difference lies in their premises: the traditional conceptualisation of network effects is grounded in economic theory, whereas data network effects (DNEs) are grounded in the inherent technical capacity of the platform (Gregory et al., 2021).

Moreover, whereas traditional network effects rely on the overall user base of a product or service, DNEs arise when value creation is augmented by the informational outputs that users generate (Haftor et al., 2021). DNEs are especially relevant to digital platforms because the service can be improved over time through data-driven insights, yielding more sophisticated algorithms and a better user experience.

Research Methodology

Our research design adopts a mixed-method, multi-stage approach that combines systematic evidence with expert validation and rigorous analytical prioritisation. It seeks to review the concept of Data Network Effects (DNEs) holistically and to develop a robust, actionable framework that identifies the important enablers of DNEs in AI-powered platform ecosystems.

The research proceeds in three consecutive stages.

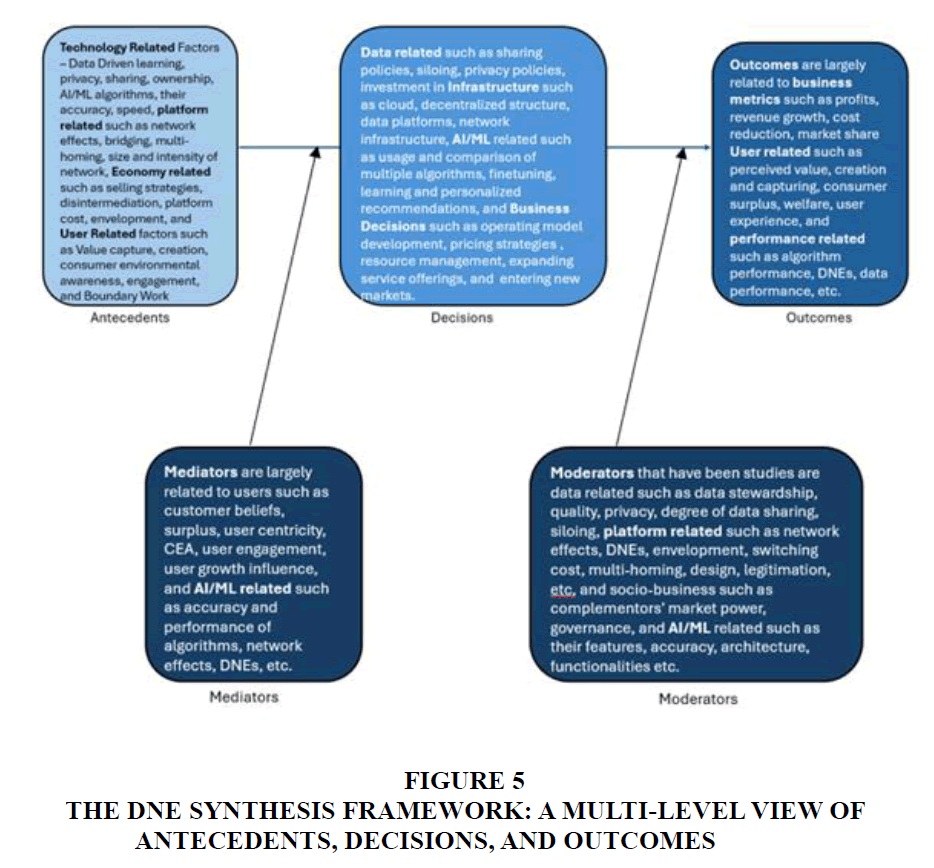

Systematic Literature Review – TCM-ADO Approach

Stage 1 involves a systematic literature review of 55 high-quality articles retrieved from A, A*, and FT50 journals and major conferences. We adopted the TCM-ADO framework because it is a widely accepted and recognised literature review methodology that enables a systematic and comprehensive analysis of existing research (Pushparaj & Kushwaha, 2024). The Theory-Context-Methodology (TCM) component directs our analysis towards the theoretical foundations used in the research, the context in which the studies were conducted, and the methodological approaches employed across the literature. The Antecedents-Decisions-Outcomes (ADO) component allows a deeper study of the causal architecture within the literature. It focuses on the variables identified in the literature: the key antecedents, the important decisions taken, and the research outcomes. ADO analysis also covers mediating and moderating variables, which enriches the study and clarifies both the key variables used by researchers and the gaps that remain. This structured analysis helped to systematically uncover the key enablers behind DNEs and to identify literature gaps that inform a future research agenda.

Article Selection Process

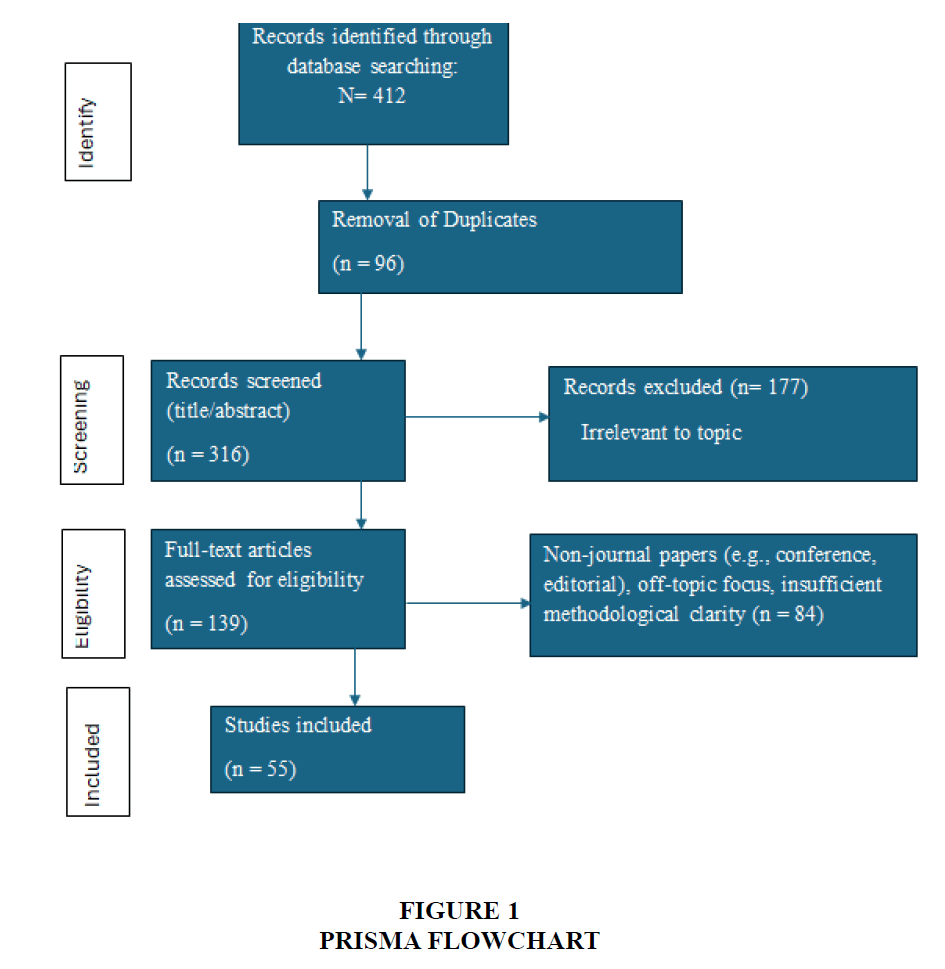

The systematic literature review followed the PRISMA 2020 guidelines for transparent evidence synthesis. To maintain quality, we restricted our analysis to A, A*, and FT50 papers extracted from Scopus and Web of Science, together with papers from top AIS conferences. The reason for including conference papers is twofold. First, the theory of DNEs is contemporary and was first introduced in 2021; hence, few research papers have been published in top journals to date. Second, top-ranked conferences tend to give insights into the research community's current and future trends (Table 1).

Table 1: Details of Literature Search and Filtering (PRISMA Protocol)

| Criteria | Description |
| --- | --- |
| Source | Scopus, Web of Science |
| Years | 2019 – 2025 |
| Searching Terms/Search Strings | For Scopus, the search query was: TITLE-ABS-KEY("data network effects" OR "AI network effects" OR "machine learning network effects" OR "generative AI network effects" OR "data-driven ecosystems") AND (LIMIT-TO(SUBJAREA, "BUSI") OR LIMIT-TO(SUBJAREA, "ECON") OR LIMIT-TO(SUBJAREA, "COMP")) AND (LIMIT-TO(SRCTYPE, "j")) For Web of Science, the corresponding query was: TS=("data network effects" OR "AI network effects" OR "machine learning network effects" OR "generative AI network effects" OR "data-driven ecosystems") AND (SU=("Management" OR "Business" OR "Information Systems" OR "Operations Research")) Refined by: Document Types = (Article OR Review) AND Languages = (English) |
| Inclusion Criteria | Peer-reviewed journal or top-tier conference papers (ABDC A/A*, FT50, or equivalent); Focus on platforms, ecosystems, or data-driven feedback mechanisms; Explicit discussion of AI, ML, or generative mechanisms linked to user-data loops; Conceptual, theoretical, or empirical work with identifiable outcomes or enablers. |
| Exclusion Criteria | Purely technical AI model papers without platform or network context; Non-English publications; Duplicates or early versions of the same paper; Articles focused only on social-network diffusion unrelated to platform feedback loops. |
| Sample Size | 412 Records (Scopus = 231; WoS = 181) |
| Duplicate Removal | 316 Records |
| Title Abstract screening | 139 Records |
| Full Text Review | 55 Records |

The process is summarised in the PRISMA diagram (Figure 1).
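The screening counts reported in Table 1 can be checked with simple arithmetic. The sketch below is illustrative only and assumes that each count in the table is the number of records remaining after that step, which the table does not state explicitly; the stage labels and the `attrition` helper are our own naming.

```python
# Records reported at each screening stage in Table 1.
# Assumption: each figure is the number of records REMAINING after the step.
stages = [
    ("Identified (Scopus 231 + WoS 181)", 231 + 181),
    ("After duplicate removal", 316),
    ("After title/abstract screening", 139),
    ("After full-text review", 55),
]


def attrition(stage_counts):
    """Return (step label, records excluded, share of previous stage kept)."""
    rows = []
    for (_, previous), (label, current) in zip(stage_counts, stage_counts[1:]):
        rows.append((label, previous - current, current / previous))
    return rows


for label, excluded, kept in attrition(stages):
    print(f"{label}: excluded {excluded}, kept {kept:.1%}")
```

Under that reading, 96 records are dropped as duplicates, 177 at title/abstract screening, and 84 at full-text review, leaving the 55 studies in the final corpus.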

Illustrative Mini-Case Analysis

Following the literature synthesis, we conducted five mini-case analyses (Waze, Google Maps, Amazon Marketplace, Netflix, and TikTok) and extracted enablers from these well-known platforms for completeness. The objective was to compile a comprehensive list of key DNE enablers from both literature and practice and to validate them through expert analysis.

The synthesis yielded ten recurrent constructs, which represent the preparatory conditions for the formation and strengthening of DNEs.

Desk/Documentary Delphi

Stage 2 applied a modified Delphi methodology to the enablers identified through the TCM-ADO and case analyses in order to validate the key enablers of DNEs. Each enabler was scrutinised and rated by experts for significance and clarity using a structured rubric. Convergence was assessed using the median and interquartile range (IQR), and a second round of review refined the wording of terms where needed. This process ensured that the enablers were not only grounded in the literature but also conceptually clear, distinct, and reliable. The TCM-ADO and case analyses repeatedly identified ten constructs as enabling and reinforcing data network effects. These constructs appeared across antecedents, decision processes, and outcomes, indicating that they are central mechanisms rather than standalone variables.

However, the literature used different labels and scopes for these constructs. For example, data governance, data quality, privacy transparency, and bias control all referred to the same underlying enabler of data stewardship. Similarly, algorithmic personalisation, predictive accuracy, and model adaptability all reflected algorithmic capability. To reconcile these variations and establish conceptual clarity, a Desk/Documentary Delphi approach was applied. This method is well suited for early-stage or conceptually fragmented domains, as it derives consensus from published evidence when access to field experts is constrained (Okoli & Pawlowski, 2004; Hsu & Sandford, 2007).

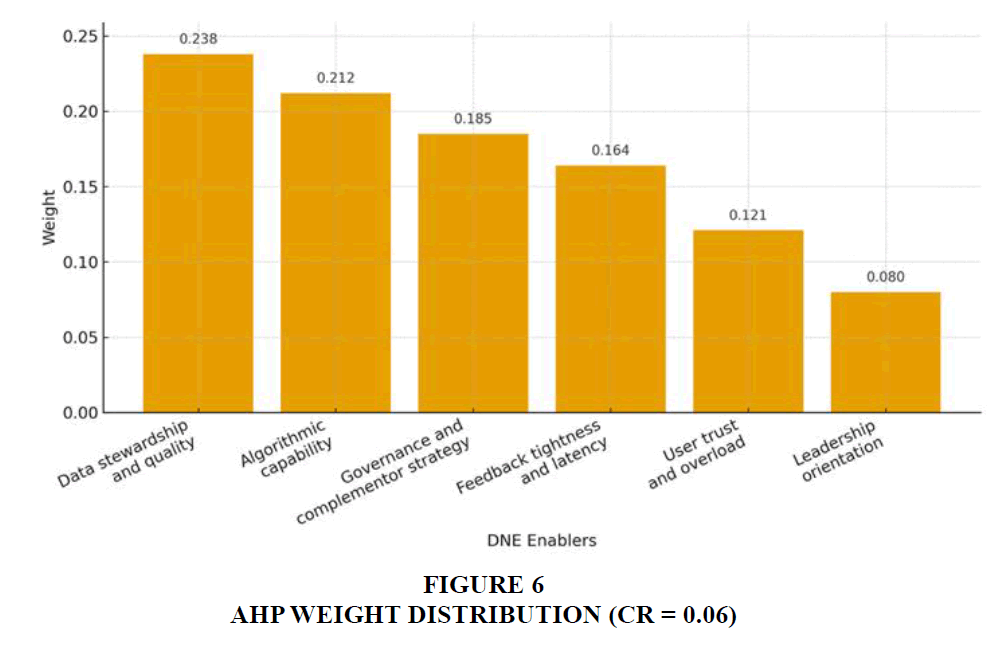

Analytic Hierarchy Process (AHP)

Stage 3 used the Analytic Hierarchy Process (AHP) to determine the relative significance of the validated enablers. Individual pairwise comparisons were made on the 1–9 scale proposed by Saaty (2008), and the group-level matrix was aggregated using the geometric mean technique (Forman & Peniwati, 1998). The Consistency Ratio (CR) was computed to check the logical consistency of the judgments, with 0.10 as the acceptance threshold. The derived normalised weights produced a ranked hierarchy of DNE enablers.

With the six validated enablers finalised through the Desk/Documentary Delphi procedure, the next goal was to determine their relative importance in strengthening Data Network Effects. For this purpose, we used the Analytic Hierarchy Process (AHP), a multi-criteria decision-making model that supports systematic pairwise comparisons of factors and converts qualitative judgments into quantitative priority weights (Saaty, 2008).

AHP was appropriate for three reasons. First, the six enablers represent interrelated strategic capabilities that cannot be evaluated independently. Second, AHP accommodates expert reasoning and captures the intensity of preference between enablers (e.g., slightly more important vs. significantly more important). Third, it includes an internal consistency check through the Consistency Ratio (CR), which strengthens the methodological rigour compared to simple ranking or Likert scoring.

This combined research design goes beyond a descriptive review by coupling conceptual synthesis with expert validation and quantitative prioritisation. It produces a systematic, evidence-based framework that not only explains the mechanisms operating in DNEs but also prioritises the most significant enablers, thereby contributing to both theoretical knowledge and managerial practice.

The combined SLR–Delphi–AHP process resulted in a validated framework for DNE enablers, their operational indicators, and relative significance. Median, IQR, and reliability statistics from the Delphi phase, alongside AHP-derived weights, are reported in the results section. Instruments, coder rubrics, pairwise matrices, and sensitivity checks are detailed in the appendix to ensure transparency and replicability.

Two independent coders reviewed the initial enabler definitions derived from the SLR and evaluated each on importance and clarity/observability using a seven-point Likert scale, following the coder rubric (Appendix A). The first round of ratings generated median and interquartile range (IQR) values for each construct. Items with Median (Importance) ≥ 6 and IQR ≤ 1 were provisionally retained. Items with strong importance but lower clarity were reworded and refined to align terminology with how the construct was most consistently described in the literature. Items with Median (Importance) < 5 were considered insufficiently supported and removed. A second round of ratings was conducted only for modified items to confirm convergence. The iterative process yielded a final, literature-tested set of six enablers, each with a defined evidence base and clear conceptual boundaries.
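The retention rule described above can be expressed as a small decision function. This is a minimal sketch, not the authors' instrument: the construct names and ratings are hypothetical, the IQR is computed with Python's inclusive quartile method (the paper does not say which quartile convention was used), and the "revise" label for items that fall between the two stated thresholds is our assumption.

```python
from statistics import median, quantiles


def iqr(ratings):
    """Interquartile range using the inclusive quartile method (an assumption)."""
    q1, _, q3 = quantiles(ratings, n=4, method="inclusive")
    return q3 - q1


def delphi_decision(importance_ratings):
    """Apply the thresholds stated in the text to one construct."""
    med = median(importance_ratings)
    if med < 5:
        return "remove"   # insufficiently supported
    if med >= 6 and iqr(importance_ratings) <= 1:
        return "retain"   # provisionally retained
    return "revise"       # assumed: reword and re-rate in round two


# Hypothetical importance ratings on the seven-point scale, one per coder.
ratings = {
    "construct_a": [6, 7],
    "construct_b": [4, 5],
    "construct_c": [5, 6],
}

decisions = {name: delphi_decision(r) for name, r in ratings.items()}
print(decisions)
```

With only two raters the IQR is a coarse dispersion measure, so a sketch like this is best read as a statement of the rule rather than a statistical test.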

Inter-rater reliability was high: the Spearman rank correlation between the two coders' ratings was r = 0.83, and decision agreement was 87 percent, indicating consistent judgments and a reliable final set. These six enablers were then used as inputs to the Analytic Hierarchy Process (AHP) to determine their relative priority in strengthening Data Network Effects, as described in Section 3.3.
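For readers who want to reproduce this kind of reliability check, the sketch below computes a Spearman rank correlation (with average ranks for ties) and a simple decision-agreement share in plain Python. The two rating vectors are hypothetical and do not reproduce the study's data or its reported r = 0.83; the function names are ours.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


def decision_agreement(decisions_1, decisions_2):
    """Share of items on which both coders reached the same decision."""
    matches = sum(a == b for a, b in zip(decisions_1, decisions_2))
    return matches / len(decisions_1)


# Hypothetical importance ratings from two coders for ten constructs.
coder_1 = [7, 6, 6, 5, 7, 4, 6, 5, 3, 6]
coder_2 = [7, 6, 5, 5, 6, 4, 7, 4, 3, 6]
print(f"Spearman r = {spearman(coder_1, coder_2):.2f}")
```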

The hierarchy for this study consisted of two levels. Level 1 was the overall goal: to prioritise the enablers that most strongly drive data network effects in AI-enabled platform ecosystems. Level 2 comprised the six Delphi-validated enablers: (1) data stewardship and quality, (2) algorithmic capability, (3) governance and complementor strategy, (4) feedback tightness and latency, (5) user trust and overload, and (6) leadership orientation.

Pairwise comparisons were conducted for every possible pair of enablers using Saaty’s 1–9 scale. Two independent coders (the same coders as in the Delphi stage) provided their judgments based on both the literature synthesis and their domain understanding. Each coder completed a full 6 × 6 comparison matrix. The matrices were then aggregated using the geometric mean method, as recommended by Forman and Peniwati (1998), to produce a group-level comparison matrix. This matrix was used to compute the principal eigenvector, which yields the normalised weights for each enabler.
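The aggregation and weighting steps can be sketched as follows. For brevity the example uses two hypothetical 3 × 3 matrices rather than the study's 6 × 6 matrices, and it approximates the principal eigenvector by power iteration, which is one common way to obtain it; the paper does not state which numerical routine was used. All names and values are illustrative.

```python
from math import prod


def geometric_mean_matrix(matrices):
    """Element-wise geometric mean of several pairwise comparison matrices."""
    n = len(matrices[0])
    k = len(matrices)
    return [
        [prod(m[i][j] for m in matrices) ** (1 / k) for j in range(n)]
        for i in range(n)
    ]


def principal_weights(matrix, iterations=100):
    """Approximate the normalised principal eigenvector by power iteration."""
    n = len(matrix)
    w = [1 / n] * n
    for _ in range(iterations):
        w_new = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(w_new)
        w = [v / total for v in w_new]
    return w


# Hypothetical reciprocal judgments on Saaty's 1-9 scale for three enablers.
coder_a = [[1, 3, 5], [1 / 3, 1, 2], [1 / 5, 1 / 2, 1]]
coder_b = [[1, 2, 4], [1 / 2, 1, 3], [1 / 4, 1 / 3, 1]]

group = geometric_mean_matrix([coder_a, coder_b])
weights = principal_weights(group)
print([round(w, 3) for w in weights])
```

The element-wise geometric mean preserves the reciprocal property of the matrices (entry `[i][j]` times entry `[j][i]` stays equal to 1), which is the usual argument for preferring it over an arithmetic mean when aggregating individual judgments.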

To ensure logical consistency in the comparative judgments, the Consistency Ratio (CR) was calculated. Following Saaty’s (1994) guideline, a CR ≤ 0.10 indicates acceptable consistency. Our aggregated matrix achieved CR = 0.06, confirming that the pairwise comparisons were coherent and methodologically sound.
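For reference, the Consistency Ratio follows from the principal eigenvalue of the comparison matrix. The worked figures below are a back-calculation rather than values reported by the study: they assume the commonly tabulated random index RI = 1.24 for n = 6 and treat the reported CR = 0.06 as exact, so the implied eigenvalue is only approximate.

```latex
\[
\mathrm{CI} = \frac{\lambda_{\max} - n}{n - 1},
\qquad
\mathrm{CR} = \frac{\mathrm{CI}}{\mathrm{RI}}
\]
% Assumption: n = 6 enablers and RI = 1.24 (tabulated random index for n = 6).
\[
\mathrm{CI} \approx 0.06 \times 1.24 = 0.0744,
\qquad
\lambda_{\max} \approx n + (n - 1)\,\mathrm{CI} = 6 + 5 \times 0.0744 \approx 6.37
\]
```

A perfectly consistent matrix has a principal eigenvalue equal to n, so an implied value of about 6.37 against n = 6 sits comfortably within the CR ≤ 0.10 threshold.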

The resulting weight vector reflects the relative influence of each enabler in driving DNEs and forms the basis for the findings reported in Section 5. This quantitative prioritisation adds analytical depth to the literature-driven Delphi results and allows the study to move beyond listing factors toward establishing a structured hierarchy of what matters most in sustaining data network effects.

Systematic Literature Review

This section discusses the literature review analysis and findings. We first analyse the definitions of DNEs in the selected corpus of articles to crystallise the understanding of data network effects. We then move to the TCM-ADO analysis, which provides a comprehensive and structured view of the research conducted on DNEs.

Defining DNEs

We start our analysis by synthesising the definitions of DNEs in the shortlisted papers. This provides a comprehensive view of how different authors have conceptualised data network effects, emphasising the role of data, machine learning, and AI in enhancing value for the different stakeholders of digital platforms (Table 2).

Table 2: Definitions of DNEs

| Title | Authors | Journal | Definition of DNE |
| --- | --- | --- | --- |
| The role of artificial intelligence and data network effects for creating user value | Robert Wayne Gregory, Ola Henfridsson, Evgeny Kaganer and Harris Kyriakou | Academy of Management Review | Data network effects are exhibited when a platform becomes more valuable to each user as it identifies patterns from the data it collects on users, thus improving the platform's offerings through AI and machine learning. |
| Is smart the new green? The impact of consumer environmental awareness and the data network effect | Yugang Yu, Xin Zhang, Xiong Zhang, Wei T Yue | Information Technology and People | Data network effects are a phenomenon whereby a product's offering improves with the accumulation and analysis of data, providing actionable insights and offering increased value and a better user experience. While traditional network effects rest on product compatibility and installed base, data network effects highlight the role of data as a value and satisfaction enhancer. |
| The Value of Data and Its Impact on Competition | Marco Iansiti | Harvard Business School Working Paper | Data network effects arise when data on the platform creates a positive feedback loop: the more quality data is obtained, the finer the improvements in product development are, which attracts more users to the product and, hence, generates yet more data. Hence, the cycle may result in increased revenues and market share for the incumbent firms. The data network effect depends on data quality, data volume, and data governance. |
| Why Some Platforms Thrive and Others Don't | Marco Iansiti, Karim R Lakhani, Ming Zeng | Harvard Business School | Data Network Effects occur when the value for platform users gets enhanced as more data is generated and eventually used within the network. DNEs can differ in structure and intensity according to how well the platform handles and exploits user-generated data. |
| From platform growth to platform scaling: The role of decision rules and network effects over time | Suzana Varga, Magdalena Cholakova, Justin J P Jansen, Tom J M Mom, Guus J M Kok | Journal of Business Venturing | Data network effects essentially form a new category of network effects, derived from greater platform value owing to immense quantities of data available due to an increasing installed base. These effects arise through sophisticated AI/ML programs that collect, analyse, and make clever use of data generated by users to narrowly improve platform offerings, improve user experiences, and increase the value users receive. Thus, these data network effects complement the traditional network effects present in a platform by unlocking additional opportunities, targeting potential user communities, strategically shaping supply and demand balance, and creating extra value for platforms to capture. |
| Regulating Algorithmic Learning in Digital Platform Ecosystems through Data Sharing and Data Siloing: Consequences for Innovation and Welfare | Jan Krämer, Shiva Shekhar, Janina Hofmann | International Conference on Information Systems | Data-driven network effects belong to the other type of a learning cycle: Data-driven activities and consumption generate more data meant for algorithmic learning and data analytics to further improve the service, which increases the demand and produces even more data thereupon. |
| How machine learning activates data network effects in business models: Theory advancement through an industrial case of promoting ecological sustainability | Darek M Haftor, Ricardo Costa Climent, Jenny Eriksson Lundström | Journal of Business Research | Data Network Effects (DNE) refer to the phenomenon where the value for the users of a platform gets enhanced as it learns from the data it collects from its users. This learning is achieved by machine learning techniques that analyse large volumes of data to extract patterns and perform predictions. The more data a platform collects, the better it can improve its offerings, which in turn attracts more users, creating a positive virtuous loop. Unlike traditional network effects that depend on the number of users, DNE focuses on the scale and quality of data-driven learning and improvements. |
| A pathway to bypassing market entry barriers from data network effects: A case study of a start-up's use of machine learning | Darek M Haftor, Ricardo Costa-Climent, Samuel Ribeiro Navarrete | Journal of Business Research | Data Network Effects (DNE) refer to a self-reinforcing loop where data is continuously gathered and analysed to improve predictions and recommendations. This process locks in existing users and attracts new ones, creating a barrier for competitors. Unlike conventional network effects that depend on network size, DNE depends on the scale of learning from data. The process involves collecting user data, analysing it to detect patterns, making predictions, and using the outcomes to refine the data patterns, thereby continuously improving the service. |
| Data-enabled learning, network effects, and competitive advantage | Andrei Hagiu, Julian Wright, Luis Cabral, Emilio Calvano, Jacques Cremer, Bruno Jullien, Byung-Cheol Kim, Martin Peitz | The RAND Journal of Economics | Data Network Effects (DNE) refer to the phenomenon where the value of a product or service improves as more consumers use it, driven by data-enabled learning. This involves firms improving their products based on data collected from users, which in turn attracts more users and more data, creating a self-reinforcing cycle. Consumer beliefs about the number of other users also play a crucial role in determining the product's value. |

Across the corpus, DNEs are consistently described as a four-part learning loop: (1) interaction-generated data inputs; (2) a learning engine (AI/ML/GenAI) extracting patterns; (3) value improvements surfaced back to users (e.g., relevance, quality, latency, cost); and (4) a virtuous feedback cycle where better service stimulates more use and thus more data (Gregory, Henfridsson, Kaganer, & Kyriakou, 2021; Hagiu & Wright, 2023; Berman & Katona, 2019). Two features are salient. First, unlike classic network effects that scale mainly with user counts (Katz & Shapiro, 1994; Trusov, Bucklin, & Pauwels, 2009), DNE intensity scales with learning quality (data richness/timeliness and model efficacy). Second, contemporary platform work shows how Generative AI tightens feedback cycles, changes complementor roles, and raises governance demands around exposure, noise, bias, and overload (Loebbecke, Obeng-Antwi, Boboschko, & Cremer, 2024; Malgonde, 2025; Sola, Qureshi, & Khawaja, 2024; Qiu, Zhang, You, & Zhao, 2025).

Synthesised definition

Data Network Effects (DNEs) are self-reinforcing feedback cycles in which a platform’s value to each user increases through data-driven learning. Rather than value rising primarily with network size, DNEs strengthen when platforms convert interaction data into visible service improvements, which in turn stimulate further use and data generation, compounding learning and advantage (Gregory et al., 2021; Hagiu & Wright, 2023) (see Figure 2).

DNEs depend on the scale of learning that a platform achieves, through machine learning and artificial intelligence, from the data it collects from its user base (Haftor et al., 2023). A platform can collect more data as its user numbers grow, so its learning is correlated with network size. With DNEs, however, what matters is the learning derived from the volume of interactions rather than the number of stakeholders.
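The four-part loop described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model taken from the reviewed studies: the function `simulate`, the saturating value curve, and every parameter value (`learning_efficacy`, `growth_sensitivity`, `data_per_user`, `half_saturation`) are hypothetical and chosen only to show how value can track learning quality rather than user count alone.

```python
def simulate(users, learning_efficacy, rounds=10, growth_sensitivity=0.05,
             data_per_user=1.0, half_saturation=500.0):
    """Toy DNE loop; all parameters are hypothetical illustration values."""
    data = 0.0
    history = []
    for _ in range(rounds):
        # (1) Interactions generate data.
        data += users * data_per_user
        # (2) The learning engine converts data into usable knowledge.
        learned = learning_efficacy * data
        # (3) Value surfaced to users saturates between 0 and 1.
        value = learned / (learned + half_saturation)
        # (4) Better service stimulates more use, and therefore more data.
        users *= 1.0 + growth_sensitivity * value
        history.append((users, value))
    return history


# A small network that learns well versus a large network that learns poorly.
small_high = simulate(users=200.0, learning_efficacy=1.0)
large_low = simulate(users=1000.0, learning_efficacy=0.1)
print("small network, strong learning:", round(small_high[-1][1], 3))
print("large network, weak learning:  ", round(large_low[-1][1], 3))
```

Under these assumed parameters, the smaller platform with the stronger learning engine delivers higher per-user value in every round, which mirrors the argument that DNE intensity scales with learning quality rather than with network size.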

TCM–ADO synthesis of the 55 studies

This section synthesises 55 high-quality studies on data network effects (DNEs) using the Theory–Context–Methodology (TCM) and Antecedents–Decisions–Outcomes (ADO) frameworks. The goal is to understand how researchers explain DNE mechanisms and to identify the recurring constructs that ultimately form the key enablers we later validate through Delphi and prioritise using AHP. The synthesis shows not only what is known, but also how the field converges around specific conditions that strengthen DNEs in AI-enabled platforms.

Theory

Theories advance research by providing a base on which researchers build and validate findings, whether by adding new variables, integrating existing variables, or adopting new methodologies to test established theories (Tranfield et al., 2003). Research that lacks a solid theoretical foundation loses credibility and acceptance in the research community. Our set of 55 selected papers is well grounded theoretically and spans several disciplines: Strategy, Management, Economics, Information Systems, Psychology, Operations, and Sustainability.

Across the corpus, the theoretical foundations of DNEs have evolved significantly. Early work grounded platform research in two-sided markets, network externalities, and pricing strategies, where value grew with user numbers. More recent literature moves beyond scale to explain how data and machine learning create new positive feedback loops (Brynjolfsson et al., 2019; Gregory et al., 2021; Hagiu & Wright, 2023).

The Network Effects Theory and Platform Theory are still central to the constructs (Gregory et al., 2021; Ens et al., 2023), often combined with the data-enabled learning or artificial intelligence capability perspectives (Haftor et al., 2021; Jiang et al., 2025). According to the theories of governance and architecture, the control of a platform, openness, and complementor strategies play a central role in data flow and value capture (Tiwana et al., 2010; Zeng et al., 2019; Wallbach et al., 2019; Knorr et al., 2025).

These viewpoints intersect: Data Network Effects depend on how platforms control data, design algorithms, and encourage ecosystem actors to participate in the learning cycles. Data stewardship, algorithmic capability, and governance therefore emerge as the key theoretical sources. However, we did not find any study that connects data network effects with the platform architecture theory of Tiwana et al. (2010). This gap merits study: future work could investigate how AI combines with the modular and open architecture of digital platforms and how platform architecture affects DNEs.

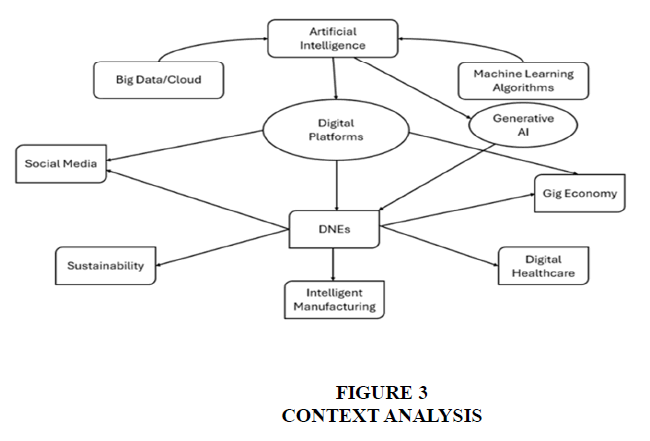

Context

Although DNE mechanisms have been studied across heterogeneous fields, the mechanisms reported are strikingly similar.

Consumer-centred platforms, such as social media, streaming, and creator ecosystems, underline the significance of personalisation and discovery loops (Berman & Katona, 2019; Gala et al., 2024; see also the TikTok and Netflix cases). Operational and supply-chain platforms focus on data aggregation, predictive decision-making, and performance feedback systems (Song et al., 2024; Tukiainen & Paavola, 2022; Liu et al., 2023). Educational and service-oriented platforms emphasise adaptive content delivery and design-thinking frameworks (Wang et al., 2024; Jiang et al., 2025). Marketplaces and the gig economy are studied through the prism of multi-sided incentives and competition (Hagiu & Wright, 2023; Mardan & Tremblay, 2025).

The same processes recur across these diverse environments: the systematic accumulation of trustworthy data, algorithmic learning, the return of value to participants, and the reinforcement of participant involvement. This cross-contextual consistency confirms that DNE enablers are structural rather than domain-specific (see Figure 3).

Methodology

Methodological diversity strengthens the empirical base. Conceptual and theoretical papers articulate the logic and boundaries of DNEs (Gregory et al., 2021; Clough & Wu, 2022; Gregory et al., 2022). Case studies demonstrate how platforms implement data pipelines, feedback loops, and governance strategies (Tukiainen & Paavola, 2022; Kandaurova & Skog, 2024; Haftor et al., 2021). Econometric and algorithmic models quantify effects and optimise interventions (Sanchez-Cartas & Katsamakas, 2024; Song et al., 2024; Yu et al., 2021; Hagiu & Wright, 2023; Demirci et al., 2025).

While the field is still maturing, the combination of conceptual development and empirical evidence justifies a structured consolidation of findings. This synthesis therefore moves from a descriptive literature review to the analytical extraction of core enablers. We nonetheless see scope for future empirical and quantitative research that measures DNEs as more firms deploy AI and ML in their platforms.

Antecedents

Antecedents are essentially the independent variables of the research, which can be the events, actions, and trigger variables that put into motion the decision-making process and influence the outcomes and dependent variables. Antecedents represent the conditions that enable DNE formation. Across studies, seven recurring antecedent clusters emerged:

1. Data quality and governance: accuracy, timeliness, bias control, access, privacy, stewardship (Gregory et al., 2021; Yu et al., 2021; Hammond-Errey, 2024; Iansiti, 2021).

2. AI/ML capability: personalisation, model adaptability, explainability, update cadence (Hagiu & Wright, 2023; Wang et al., 2024; Jiang et al., 2025).

3. Platform governance and complementor management: data-sharing policies, multihoming rules, incentives (Zeng et al., 2019; Wallbach et al., 2019; Knorr et al., 2025; Ens et al., 2023).

4. Feedback speed and visibility: time between interaction, learning, and user-facing improvement (Malgonde, 2025; Loebbecke et al., 2024; Kandaurova & Skog, 2024).

5. User trust and cognitive capacity: privacy, perceived fairness, overload, anxiety (Qiu et al., 2025; Yeon et al., 2025; Fang & Jiang, 2024).

6. Leadership and strategic orientation: top management AI literacy, resource commitment, governance support (Pinski et al., 2024; Climent et al., 2024).

7. User-centric design: the platform's user experience should transmit the benefits of data-driven learning to users seamlessly (Gregory et al., 2021).

These became the raw material for the enabler list.

Decisions

Decisions in the literature show how antecedents are operationalised through actions taken by various platform stakeholders. The antecedents may lead to some experiments, modelling, and algorithm implementation to generate the outcomes for the research. These interventions or actions are called decisions in the ADO analysis. In our study, we found 34 unique decisions triggered by the antecedents' actions. Platforms invest in data infrastructure, labelling, and transparency to improve data stewardship (Yu et al., 2021; Hammond-Errey, 2024). They embed AI-driven matching, recommendation, or prediction models to enhance algorithmic capability (Wang et al., 2024; Jiang et al., 2025). They design rules for complementors and control multihoming to manage value leakage (Knorr et al., 2025; Zeng et al., 2019). They reduce feedback latency through real-time updates and interface changes (Malgonde, 2025; Loebbecke et al., 2024). They implement trust-building measures to reduce user resistance (Qiu et al., 2025; Li et al., 2025). Finally, leadership sets strategy and allocates resources to sustain data and AI initiatives (Pinski et al., 2024; Climent et al., 2024).

These decisions map directly to how the six enablers function in practice.

Outcomes

Outcomes of DNEs across studies fall into four clusters:

• Business performance and growth: revenue, market share, creator performance, entry into new segments (Haftor et al., 2021; Vomberg et al., 2023; Gala et al., 2024).

• User value: experience, engagement, loyalty, perceived assistance (Wang et al., 2024; Yeon et al., 2025; Song et al., 2024).

• Technical performance: model accuracy, platform efficiency, prediction quality (Song et al., 2021; Deng et al., 2025).

• Ecosystem health: complementor success, spillover control, multi-sided welfare (Hagiu & Wright, 2023; Knorr et al., 2025; Zeng et al., 2019).

These outcomes confirm that DNEs create advantage only when antecedents and decisions are in place, reinforcing the need to identify key enablers.

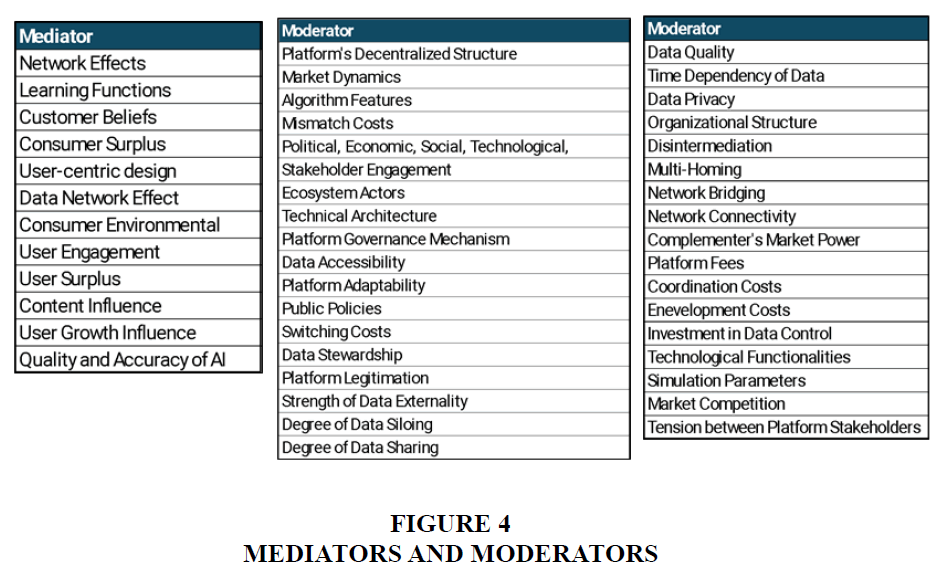

Moderators and Mediators

Our analysis reveals that the relationship between Data Network Effects and platform outcomes is not direct but is shaped by a complex interplay of mediating and moderating variables, both of which are critical in explaining the link between antecedents and outcome variables. Mediators explain how or why an antecedent leads to an outcome, whereas moderators affect the direction and strength of that relationship. We identified 12 mediating and 35 moderating variables through our analysis, as shown in Figure 4.

The literature identifies mediating variables that explain how DNEs create value, falling into two main categories: user-centric factors (such as user engagement and beliefs) (Vomberg et al., 2023; Hagiu & Wright, 2023; Berman & Katona, 2019; Yu et al., 2021) and platform-centric mechanisms (such as design and the quality of data and algorithms) (Sanchez-Cartas & Katsamakas, 2024; Yu et al., 2021; Vomberg et al., 2023; Mayer et al., 2024). More prevalent are the moderating variables, which influence the strength and direction of this value creation. These moderators can be grouped into four key areas: the characteristics of the data itself (e.g., quality, accessibility, privacy) (Kandaurova & Skog, 2024; Hagiu & Wright, 2023; Gregory et al., 2021; Haftor et al., 2021; Iansiti, 2021), the platform's technical aspects (Clough & Wu, 2022; Sanchez-Cartas & Katsamakas, 2024; Gregory et al., 2021; Alaimo et al., 2019; Varga et al., 2023), its governance and network structure (e.g., switching costs, multi-homing) (Kandaurova & Skog, 2024; Hagiu & Wright, 2023; Haftor et al., 2021; Gregory et al., 2021; Berman & Katona, 2019; Haftor et al., 2023), and broader macro-environmental factors (such as market dynamics and public policy) (Sanchez-Cartas & Katsamakas, 2024; Tukiainen & Paavola, 2022; Hagiu & Wright, 2023; Yu et al., 2021; Zhu & Iansiti, 2019; Varga et al., 2023). Together, these variables show that the success of DNEs is highly dependent on a firm's strategic choices and its surrounding context.
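In regression terms, the two roles are conventionally written as follows. This is a generic textbook formulation offered for orientation only; the symbols are assumptions introduced here ($X$ an antecedent, $M$ a mediator, $Z$ a moderator, $Y$ an outcome) and are not taken from any of the reviewed studies.

```latex
\begin{align}
\text{Mediation:}\quad  & M = i_M + aX + \varepsilon_M, \qquad
                          Y = i_Y + c'X + bM + \varepsilon_Y \\
\text{Moderation:}\quad & Y = \beta_0 + \beta_1 X + \beta_2 Z
                          + \beta_3 (X \times Z) + \varepsilon
\end{align}
```

In the mediation pair, the indirect effect of $X$ on $Y$ is the product $ab$ and $c'$ is the remaining direct effect. In the moderation equation, a non-zero $\beta_3$ indicates that the strength or direction of the effect of $X$ on $Y$ changes with $Z$.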

Synthesising the Research on DNEs – A Conceptual Framework

To consolidate the current state of research on Data Network Effects (DNEs) and provide direction for future scholarly inquiry, this study presents the DNE Synthesis Framework: A Multi-Level View of Antecedents, Decisions, and Outcomes (see Figure 5). This integrative model is built on a systematic review of the literature and assigns the important variables to their causal roles. It captures the dynamic and multidimensional nature of how DNEs add value and produce concrete outputs. The framework outlines the Antecedents underlying the production of DNEs, the Decisions that firms need to make to capitalise on them, and the Outcomes that reflect their consequences.

According to our framework and analysis, value creation from DNEs is a complex, multi-level strategic problem. The antecedents identified in extant research indicate that the success of DNEs depends on a combination of technological factors (e.g., the accuracy of AI/ML algorithms) (Akter et al., 2022), platform-specific features (e.g., network presence), and user-generated inputs. Firms, however, need to commit to certain actions in terms of data governance policies (Jin & Wagman, 2021), infrastructure investment, and business strategy in order to make effective use of these enablers. Finally, the effects of DNEs are observed at the business, user, and performance levels. The outcomes of these decisions depend on several interrelated conditions, including the level of user engagement and the quality of algorithms, and are further moderated by contextual variables such as data quality, platform governance systems, and current market conditions (Haftor et al., 2021; 2023). Through this structured analysis, our framework highlights key gaps in the extant research, paving the way for future research on DNEs (see Figure 5).

DNE in Practice: Illustrative Mini-Cases Analysis

To bridge the gap between theoretical synthesis and actual implementation, we analysed the actuation of Data Network Effects on five prominent digital platforms: Waze, Google Maps, Netflix, Amazon, and TikTok. The objective was to correlate, contrast and uncover the additional enablers of the success of DNEs on AI platforms.

Case Study: Waze - The Real-Time Data Engine

Waze, the GPS navigation platform acquired by Google, offers a canonical example of a powerful Data Network Effect (DNE) built on a foundation of real-time, crowdsourced data. Unlike traditional navigation systems, Waze’s core value proposition—its ability to provide dynamic traffic updates and route optimisations—is a direct product of the data contributed by its active user base.

The platform's DNE is activated through a rapid, self-reinforcing virtuous cycle powered by a hybrid data acquisition model. Each active user's device passively contributes anonymised GPS data, forming a baseline of traffic flow. This is powerfully augmented by active, user-generated reports on incidents like traffic jams, accidents, or police presence. This massive, dual stream of data is aggregated and analysed by Waze's algorithms in real time.¹ This process masterfully fulfils two critical conditions for a sustainable DNE: insights generated from one user's data immediately benefit the entire community (cross-user learning), and these benefits are delivered instantly to current users as optimised routes, not merely as features in future product updates (real-time improvement) (Clough & Wu, 2022; Gregory et al., 2021). The improved service, offering tangible time-saving benefits, in turn attracts more users, thereby enriching the real-time data asset and making the map ever more accurate.

Waze’s competitive advantage is derived from the unique, real-time nature of its DNE, which contrasts sharply with the "stock of knowledge" DNEs found in other platforms like Netflix. For Waze, the value of the data asset diminishes almost instantly; a traffic jam from an hour ago is largely worthless. This strategic focus on data flow over data stock creates a powerful form of network lock-in and an exceptionally high barrier to entry. A competitor cannot simply acquire a static dataset; they must achieve a critical mass of co-located, concurrent users to provide a service of similar real-time quality, a notoriously difficult threshold to reach.

Case Study: Google Maps – The Comprehensive Data Integrator

While Waze exemplifies a DNE built on real-time user-generated velocity, Google Maps demonstrates a more comprehensive and multi-faceted approach, built on the unparalleled breadth and integration of its data assets. Its competitive advantage stems not from a single feedback loop, but from weaving together multiple data streams—including passive user location data, satellite imagery, business information, and municipal data—into a single, unified service (Tamiminia et al., 2020).

Google Maps' virtuous cycle is robust, though it operates with a different cadence than Waze's. The platform passively collects anonymised location data from millions of Android users, creating a vast baseline for traffic analysis. This is augmented by active user contributions, such as business reviews and route usage, which are integrated to enhance service quality. This multifaceted data collection enables powerful cross-user learning; improvements in one domain, such as updated transit schedules from a municipal authority, benefit all users planning a commute (Sheppard & Cizek, 2009). While its user-reported incident system is less central than Waze's, Google Maps still satisfies the condition of real-time improvement by dynamically adjusting route estimates based on aggregated, live traffic patterns, even if it is more accurately described as "near real-time" (Santos et al., 2011). The resulting comprehensive and reliable service attracts more users, further deepening the data asset across all integrated domains.

Google Maps' DNE is a powerful example of the "data integration" advantage. Unlike platforms reliant on a single type of data, Google creates value by combining disparate datasets, where the whole becomes greater than the sum of its parts. This creates an almost insurmountable barrier to entry, as a competitor would need to replicate not just one data feedback loop, but an entire ecosystem of interconnected data sources.² However, this centralised power also represents its greatest vulnerability, exposing the tension between user value creation and platform value capture and making it a focal point for regulatory scrutiny over privacy and monopolistic behaviour.

Case Study: Netflix - Personalisation and Its Limits

Netflix, the global streaming leader, provides a classic example of a Data Network Effect built on a vast "stock of knowledge." Unlike the real-time data flow that powers Waze, Netflix's competitive advantage is derived from its massive, accumulating archive of user viewing habits, which it uses to power a sophisticated personalisation engine (Walker et al., 2017).

The platform's virtuous cycle begins as subscribers engage with its content library, generating a rich dataset of viewing history, ratings, search queries, and even subtle interactions like pausing or re-watching scenes. Netflix's renowned recommendation algorithms then apply AI/ML to this data, fulfilling the condition of cross-user learning by using insights from one user's tastes to refine suggestions for others with similar profiles (Gregory et al., 2021). The service is then enhanced in near real-time as these algorithmic suggestions dynamically adapt to a user's evolving preferences, improving content discovery and increasing engagement (Gaw, 2021). This personalised experience drives user retention and attracts new subscribers, who in turn contribute their data, further deepening the "stock of knowledge" and strengthening the cycle.³

The efficacy of this DNE is contingent upon Netflix's execution across the three foundational dimensions. Its User-Centric Design is core to the data collection process; a seamless, multi-device interface encourages binge-watching and frequent interaction, ensuring a continuous flow of data inputs (Min & Lee, 2019). This data asset is managed through robust Data Stewardship, where advanced analytics and data governance ensure the information is clean, secure, and effectively utilised for both recommendations and content acquisition strategies.⁴ Finally, the entire model rests on Platform Legitimation; Netflix must continually navigate diverse global regulations and maintain user trust regarding how their personal viewing data is handled (Rahman et al., 2024).

Netflix's DNE, while powerful, is fundamentally different and arguably more vulnerable than that of platforms like Waze or Google. Its value is a powerful complement to its primary strategic asset: its library of proprietary content. The data creates a significant personalisation moat, but it is contingent on having desirable content to collect data on in the first place. Furthermore, this DNE is susceptible to "algorithmic fatigue," where over-personalisation can trap users in filter bubbles, potentially limiting content discovery and long-term engagement (Yang et al., 2024). This highlights a critical insight: for content-based platforms, a data network effect is a formidable competitive weapon, but it must be perpetually fuelled by fresh, compelling content to remain effective.

Case Study: Amazon Marketplace - The Commercial Optimisation Engine

Amazon Marketplace presents a powerful hybrid model where traditional indirect network effects (more buyers attracting more sellers, and vice versa) are supercharged by a deeply integrated Data Network Effect. While the variety of sellers provides selection, it is the data generated from millions of daily transactions that enables Amazon to optimise the entire commercial process, from product discovery to fulfilment.

The platform's virtuous cycle is a masterclass in leveraging transactional data. As users browse, search, and purchase, they generate a massive dataset that fuels Amazon’s recommendation and personalisation engines. This directly fulfils the condition of cross-user learning, as the purchasing patterns of one cohort are used to generate relevant suggestions (e.g., "Customers who bought this also bought...") for others, significantly enhancing product discovery (Jacobides et al., 2024). Furthermore, Amazon applies this data for real-time improvement not just in recommendations, but also in dynamic pricing, advertising placement, and inventory management, thereby optimising the platform’s operational efficiency (Seyi-Lande et al., 2024). A more efficient and personalised marketplace attracts more buyers and retains sellers, which in turn generates more transactional data, creating a formidable self-reinforcing loop.

The sustainability of this cycle is underpinned by Amazon's execution on the three foundational DNE dimensions. Its User-Centric Design focuses on reducing friction in data generation; features like one-click purchasing, customer reviews, and wish lists make the act of providing valuable data a seamless part of the shopping experience (Tongxiao et al., 2011). This torrent of data is managed through world-class Data Stewardship, where advanced analytics are used not only for personalisation but also for critical functions like supply chain optimisation and fraud detection (Akter & Wamba, 2016). This entire ecosystem is built on a foundation of Platform Legitimation, which Amazon cultivates through robust seller verification, transparent review systems, and customer-centric policies like the A-to-Z Guarantee, all designed to foster the trust necessary for participation (Dunn, 2020).

Amazon’s DNE is unique because its primary function is the optimisation of the entire commercial engine. Unlike Netflix, which optimises content discovery, or Waze, which optimises navigation, Amazon uses data to enhance every step of the value chain: search, recommendation, pricing, inventory, and logistics. This creates a multi-layered competitive moat that is extraordinarily difficult for rivals to assail. A competitor must not only achieve a critical mass of buyers and sellers but also replicate the sophisticated data infrastructure required to optimise the entire commerce experience at scale. However, this unmatched data aggregation also makes Amazon a prime target for regulatory scrutiny regarding competition and data privacy.

Case Study: TikTok - Viral Content Amplification

TikTok, the short-video platform that has redefined social media, represents arguably one of the most potent and rapidly iterating Data Network Effects in the digital economy. Its "For You" feed is a masterclass in algorithmic curation, where the platform's value is derived almost entirely from its ability to learn from user interactions and predict what content will maximise engagement (Yu, 2019).

The platform’s virtuous cycle operates with unparalleled speed. As users interact with content through watch time, likes, shares, and even subtle cues like re-watches, the algorithm gathers data that immediately refines the content feed. This system perfectly embodies the core conditions of a DNE: it facilitates powerful cross-user learning, where the viewing patterns of one user cohort inform the recommendations for another, and delivers real-time improvement, as the "For You" feed adapts almost instantly to a user's shifting interests. This hyper-personalised experience drives immense engagement, which in turn provides a massive and continuous stream of data, allowing the algorithm to learn and improve at an exponential rate.

The success of this powerful engine is built upon a foundation of deliberate design choices. TikTok's User-Centric Design is optimised for frictionless data generation; the endless scroll and simple interaction tools encourage passive consumption and active creation, ensuring a constant data deluge (Wang et al., 2024). The platform's Data Stewardship involves managing these colossal data streams to feed its personalisation algorithms, although this practice is at the heart of its privacy controversies. It is on the dimension of Platform Legitimation that TikTok faces its greatest challenge. While it enforces Community Guidelines, the platform is under constant and intense scrutiny regarding its data privacy practices, algorithmic transparency, and geopolitical ties, creating significant regulatory and reputational risks (Gerrard & Thornham, 2024; Zuboff, 2019).

TikTok’s DNE is an "engagement optimisation" machine. Its competitive advantage lies in the sheer efficiency and speed of its data-to-value cycle, which is so effective that it can create viral stars and trends overnight. However, this strength is also its greatest vulnerability. The model's relentless optimisation for engagement raises significant ethical concerns about addictive design and the potential for algorithmic manipulation. Furthermore, its massive data collection and opaque algorithm make it a focal point for geopolitical conflict, demonstrating that a powerful DNE can become a strategic liability when it clashes with national security and privacy concerns.

Extraction of the Key DNE Enablers: Synthesising Literature and Cases

We used the TCM–ADO analysis and the findings from the platform cases to extract key enablers for DNEs through a structured coding and processing technique. We compared and contrasted the learnings from the literature review and the case studies to arrive at the list of key enablers for further validation and ranking.

Step 1: Open coding - We coded all antecedents, decisions, outcomes, and mediating and moderating variables across the 55 papers, then compared them with, and added, findings and themes from the case studies. For example, we extracted short phrases directly from the research variables and platform cases (e.g., “data accuracy,” “user experience,” “data privacy,” “model update,” “multihoming policies,” “trust,” “leadership support”).

Step 2: Axial clustering of similar codes - Related signals were grouped into conceptual categories. For example, “data accuracy,” “privacy,” and “governance” → Data stewardship and quality; “adaptive models,” “personalisation,” and “explainability” → Algorithmic capability.

Step 3: Saturation and boundary testing - Each cluster had to appear in multiple papers and case studies, relate to more than one stage of the DNE cycle, and be actionable or observable.
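The three steps above can be sketched in code. This is a minimal illustration rather than the coding instrument actually used: the code-to-cluster mapping reuses the examples given in Step 2, while the observation records, the source identifiers, the stage labels, and the minimum counts of two are hypothetical. The third criterion (actionable or observable) is a qualitative judgement and is deliberately not automated here.

```python
from collections import defaultdict

# Axial clustering map (Step 2); entries mirror the examples in the text.
CODE_TO_CLUSTER = {
    "data accuracy": "Data stewardship and quality",
    "privacy": "Data stewardship and quality",
    "governance": "Data stewardship and quality",
    "adaptive models": "Algorithmic capability",
    "personalisation": "Algorithmic capability",
    "explainability": "Algorithmic capability",
}


def cluster_codes(observations):
    """Group open codes (Step 1) into clusters, tracking sources and stages."""
    clusters = defaultdict(lambda: {"sources": set(), "stages": set()})
    for source, code, stage in observations:
        cluster = CODE_TO_CLUSTER.get(code)
        if cluster is None:
            continue  # code not yet assigned to any cluster
        clusters[cluster]["sources"].add(source)
        clusters[cluster]["stages"].add(stage)
    return clusters


def passes_boundary_test(entry, min_sources=2, min_stages=2):
    """Step 3: appears in multiple sources and in more than one DNE stage."""
    return (len(entry["sources"]) >= min_sources
            and len(entry["stages"]) >= min_stages)


# Hypothetical open-coding records: (source, code, stage of the DNE cycle).
observations = [
    ("paper_01", "data accuracy", "data input"),
    ("paper_02", "privacy", "value delivery"),
    ("case_waze", "data accuracy", "data input"),
    ("paper_03", "explainability", "learning"),
]

clusters = cluster_codes(observations)
retained = sorted(name for name, entry in clusters.items()
                  if passes_boundary_test(entry))
print(retained)
```

With these made-up records, only the data stewardship cluster is retained, because the algorithmic capability cluster appears in a single source and a single stage.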

Ten clusters clearly met these criteria and appeared consistently across theories, contexts, methods, antecedents, decisions, and outcomes (Table 3).

Table 3: Final Enablers with Direct Literature and Case Grounding

| Enabler | How it appears in literature | Supporting studies |
| --- | --- | --- |
| Data stewardship and quality | Data accuracy, transparency, governance, bias mitigation | Gregory et al. (2021); Yu et al. (2021); Hammond-Errey (2024); Iansiti (2021); Song et al. (2021) |
| Algorithmic capability | Learning depth, personalisation, update cadence, explainability | Hagiu & Wright (2023); Wang et al. (2024); Jiang et al. (2025); Fang & Jiang (2024); Malgonde (2025) |
| Platform Governance and complementor strategy | Data-sharing rules, incentives, multihoming control | Zeng et al. (2019); Wallbach et al. (2019); Ens et al. (2023); Knorr et al. (2025); Varga et al. (2023) |
| Feedback tightness and latency | Speed of data-to-model-to-user feedback loops | Malgonde (2025); Loebbecke et al. (2024); Kandaurova & Skog (2024); Wang et al. (2024) |
| User trust and overload | Privacy, cognitive load, transparency, perceived risk | Qiu et al. (2025); Yeon et al. (2025); Fang & Jiang (2024); Song et al. (2024); Li et al. (2025) |
| Leadership orientation | Strategic alignment, AI literacy, resource commitment | Pinski et al. (2024); Climent et al. (2024); Hammond-Errey (2024); Ens et al. (2023) |
| Platform Architecture & Infrastructure | Interoperability, boundary resources, and openness controls. APIs, standards, and governance that enable third-party complements and internalize positive externalities accelerate data generation. | Haftor et al. (2021); Hacochen (2023) |
| User Centric Design | User interface, user experience, frictionless experience, data-to-user-experience enhancement | Gregory et al. (2021); Haftor et al. (2021); Ashuri (2024) |
| Continuous Data Collection | Data variety, data volume, continuous data, real-time data | Hagiu & Wright (2023); Gu et al. (2022) |
| Proprietary Access & Data Ownership | Data ownership, data access, proprietary data | Gregory et al. (2022); Niculescu et al. (2018) |

These ten enablers are not theoretical assumptions but empirically grounded constructs repeatedly observed across the 55 research papers and 5 practical case studies. We therefore used them as the starting point for the Delphi process to consolidate terminology and validate relevance, followed by AHP to prioritise their relative importance. Section 5 details this consolidation and ranking process.

How this SLR feeds Delphi → AHP

The SLR yields a seed list of enablers that recur across high-quality studies:

Data stewardship/quality, feedback tightness/latency, algorithmic capability, governance and complementor strategy, user trust/overload, and leadership orientation (Hagiu & Wright, 2023; Knorr et al., 2025; Pinski et al., 2024; Qiu et al., 2025; Malgonde, 2025; Loebbecke et al., 2024; Vomberg et al., 2023; Gala et al., 2024). These become the Delphi items that are rated, refined and justified with traceable literature pointers; AHP then provides normalized weights and a consistency ratio for a transparent ranking that directly reflects the literature’s priorities.

Mixed Method MCDM Analysis (Delphi → AHP): Enabler Validation and Prioritisation

This section presents the process of validating and prioritising the core enablers of Data Network Effects (DNEs) identified through the expanded systematic review and case analyses. The aim was to consolidate the fragmented constructs reported across 55 high-quality research and case studies into a validated framework of enablers that influence the strength and sustainability of DNEs in AI-driven platform ecosystems. The process followed a Desk/Documentary Delphi–AHP sequence, which is well established in conceptual and early-stage technology management research (Niederberger & Spranger, 2020).

Methodological Approach

The Delphi–AHP sequence was conducted in two stages. The Delphi technique was used to reach a validated list of DNE enablers through expert consensus obtained via questionnaires and controlled feedback. The panel of 22 professionals included Assistant Professors in information systems, AI platform startup founders, business heads of platform companies, data engineers and data architects. In Stage 1, the enablers derived from the systematic literature review were assessed by experts using a structured rubric (see Appendix A). Each item was rated on two parameters, importance and clarity/observability, on a seven-point scale. Items with Median (Importance) ≥ 6 and Interquartile Range (IQR) ≤ 1 were retained; those with borderline clarity were reworded and re-evaluated in a second round. The procedure for the Delphi technique was adopted from Ishikawa et al. (1993).
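As an illustration of the Stage 1 retention rule, the following Python sketch applies the Median ≥ 6 and IQR ≤ 1 criteria to two hypothetical items. The ratings, the item names, and the inclusive quartile convention are assumptions made for this example; the study does not report which quartile method was used.

```python
from statistics import median, quantiles

# Hypothetical seven-point importance ratings (illustrative, not the study's data).
ratings = {
    "enabler_A": [6, 7, 6, 6, 7, 6, 5, 6],
    "enabler_B": [4, 6, 3, 7, 5, 2, 6, 4],
}


def iqr(values):
    """Interquartile range using the inclusive quartile convention (an assumption)."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return q3 - q1


def is_retained(values, min_median=6, max_iqr=1):
    """Apply the retention rule: median at least 6 and IQR at most 1."""
    return median(values) >= min_median and iqr(values) <= max_iqr


decisions = {name: is_retained(vals) for name, vals in ratings.items()}
for name, keep in decisions.items():
    print(name, "retained" if keep else "dropped or reworded")
```

Under these assumed ratings, the tightly clustered item passes both criteria, while the dispersed item fails on both its median and its spread.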

Given the nascent and conceptually heterogeneous nature of DNE scholarship, a Desk Delphi methodology was used instead of a more traditional respondent-based Delphi. This method is credited with uncovering consensus from empirically and theoretically documented evidence when constructs are still in their formative phase. Methodological transparency was ensured through the use of experts, systematic recording of all rating rounds, and the calculation of agreement coefficients such as Spearman's rank correlation and percent agreement. The final value for each enabler was compared with a threshold value, which was used to accept or reject the enabler and was calculated as the average of all enabler values gathered from the experts’ ratings (Mangla et al., 2018).
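The mean-based threshold rule can be sketched as follows. The scores and enabler labels are hypothetical, and treating a score at or above the threshold as acceptance is an assumption, since the text does not state how values equal to the threshold are handled.

```python
from statistics import mean

# Hypothetical final per-enabler scores (illustrative, not the study's data).
scores = {
    "enabler_1": 6.4,
    "enabler_2": 6.0,
    "enabler_3": 5.1,
    "enabler_4": 4.5,
}

# Threshold = average of all enabler values.
threshold = mean(scores.values())

# Assumption: an enabler is accepted when its score is at or above the threshold.
accepted = [name for name, score in scores.items() if score >= threshold]
rejected = [name for name in scores if name not in accepted]

print("accepted:", accepted)
print("rejected:", rejected)
```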

In Stage 2, the shortlisted enablers were compared pairwise under the Analytic Hierarchy Process (AHP), using the Saaty (2008) 1–9 scale to determine weighted priorities (Kumar et al., 2020). The resulting pairwise matrices were then aggregated using the geometric-mean method to obtain a group consensus, and the consistency of judgements was evaluated using the Consistency Ratio (CR), which was below the threshold of 0.10 (Dong et al., 2010). The resulting normalized weights indicate the relative importance of each enabler in enhancing DNEs.
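The Stage 2 computation can be illustrated with a minimal Python sketch, assuming two hypothetical experts and three items rather than the study's actual judgements. It aggregates the individual matrices by element-wise geometric mean, approximates the priority vector by power iteration (one common way to obtain the principal eigenvector; the study does not state which derivation was used), and computes CR from Saaty's random index values.

```python
import math

# Saaty's random index (RI) values for matrix sizes 3 to 6.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24}


def aggregate(matrices):
    """Element-wise geometric mean of several experts' pairwise matrices."""
    n, k = len(matrices[0]), len(matrices)
    return [[math.prod(m[i][j] for m in matrices) ** (1 / k) for j in range(n)]
            for i in range(n)]


def priorities(matrix, iterations=100):
    """Approximate the principal eigenvector by power iteration (sums to 1)."""
    n = len(matrix)
    w = [1 / n] * n
    for _ in range(iterations):
        v = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(v)
        w = [x / total for x in v]
    return w


def consistency_ratio(matrix, w):
    """CR = CI / RI, with CI = (lambda_max - n) / (n - 1)."""
    n = len(matrix)
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical reciprocal judgement matrices on the 1-9 scale.
expert_1 = [[1, 2, 4], [1 / 2, 1, 2], [1 / 4, 1 / 2, 1]]
expert_2 = [[1, 3, 5], [1 / 3, 1, 3], [1 / 5, 1 / 3, 1]]

group = aggregate([expert_1, expert_2])
weights = priorities(group)
cr = consistency_ratio(group, weights)
print([round(x, 3) for x in weights], round(cr, 3))
```

The geometric mean is used for aggregation because it preserves reciprocity: if one entry of the group matrix is a, the mirrored entry remains 1/a.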

Delphi Consensus Results

Table 4 summarises the final results of the Desk Delphi phase. Six of the ten enablers were validated, based on the convergence of the importance and clarity ratings. Expert concordance, as reflected in the median and inter-quartile range (IQR) values, is high, which supports the view that these enablers recur in high-quality DNE research and can be observed in platform ecosystems (Table 4).

| Table 4 Delphi Consensus Results | ||||||

| Enabler | Representative Indicators | Median (Imp) | IQR (Imp) | Median (Clarity) | IQR (Clarity) | Evidence Pointers |

| Data stewardship and quality | Accuracy, governance, bias mitigation, and transparency in data collection and usage | 6.5 | 0.5 | 6 | 0.5 | Gregory et al. (2021); Zhu et al. (2025); Hammond-Errey (2024) |

| Feedback tightness and latency | Time lag between data capture, model update, and visible user feedback | 6 | 1 | 6 | 1 | Malgonde (2025); Loebbecke et al. (2024) |

| Algorithmic capability | AI/ML models’ learning depth, adaptability, and ability to deliver personalised value | 6 | 1 | 6 | 0.5 | Hagiu & Wright (2023); Wang et al. (2024); Jiang et al. (2025) |

| Governance and complementor strategy | Platform policies for multihoming, data sharing, and complementor incentives | 6 | 1 | 5.5 | 1 | Knorr et al. (2025); Wallbach et al. (2019); Ens et al. (2023) |

| User trust and overload | User confidence in data usage balanced against cognitive load and perceived intrusion | 6 | 1 | 5.5 | 1 | Qiu et al. (2025); Yeon et al. (2025); Fang & Jiang (2024) |

| Leadership orientation | Strategic awareness, AI literacy, and top management commitment to data governance | 6 | 1 | 6 | 1 | Pinski et al. (2024); Climent et al. (2024) |

Inter-rater reliability was also robust, with a Spearman rank correlation coefficient of 0.83. This confirms the consistency of the experts and the convergence of their judgements across the selected items.
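For readers who wish to reproduce this kind of agreement check, a minimal sketch of Spearman's rank correlation between two raters follows. The two rating vectors are hypothetical, and comparing raters pairwise is an assumption; the study reports only the resulting coefficient.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on tie-averaged ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical importance ratings given by two raters to the same six items.
rater_1 = [7, 6, 6, 5, 6, 4]
rater_2 = [6, 6, 7, 5, 5, 4]
rho = spearman(rater_1, rater_2)
print(round(rho, 2))
```

Because seven-point ratings produce many ties, the sketch ranks with tie averaging rather than using the shortcut formula based on squared rank differences, which assumes no ties.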

AHP‑Based Prioritisation

Following the Delphi round, the six retained enablers were put through the AHP framework to measure their relative influence on DNEs. Pairwise comparisons of all pairs of enablers were made on the basis of their conceptual relevance and their empirical weight in the literature (see Appendix B). The aggregate priority vector, with an overall consistency ratio of 0.06, indicates a consistent and coherent set of judgements (Table 5, Figure 6).

| Table 5 Group-Aggregated Weights Derived Through Geometric Mean Method (Sum = 1.00) | |

| Enabler | Weight |

| Data stewardship and quality | 0.238 |

| Algorithmic capability | 0.212 |

| Platform Governance and complementor strategy | 0.185 |

| Feedback tightness and latency | 0.164 |

| User trust and overload | 0.121 |

| Leadership orientation | 0.080 |
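The weights in Table 5 can be checked and summarised with a few lines of Python. The sketch below only restates the published values; the variable names are illustrative.

```python
# Group-aggregated weights as reported in Table 5.
table_5_weights = {
    "Data stewardship and quality": 0.238,
    "Algorithmic capability": 0.212,
    "Platform Governance and complementor strategy": 0.185,
    "Feedback tightness and latency": 0.164,
    "User trust and overload": 0.121,
    "Leadership orientation": 0.080,
}

# The weights should form a normalised priority vector.
total = sum(table_5_weights.values())

# Rank enablers from highest to lowest weight.
ranked = sorted(table_5_weights, key=table_5_weights.get, reverse=True)

# Combined share of the three highest-ranked enablers.
top_three_share = sum(table_5_weights[name] for name in ranked[:3])

print(round(total, 3), ranked[0], round(top_three_share, 3))
```

The three highest-ranked enablers together account for about 0.635 of the total weight, which is the basis for treating data, algorithms, and governance as the dominant drivers.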

The analysis shows that data stewardship and quality is the most important factor in determining strong DNEs, followed closely by algorithmic capability and governance structures. These results support recent findings suggesting that effective data governance, adaptive algorithmic design, and properly tuned platform governance largely reinforce the self-reinforcing feedback loop at the core of DNEs.

The comparatively lower, but still important, weights of user trust and overload and of leadership orientation suggest that they act as moderating factors, which determine how effectively the technical and governance enablers translate into long-term user engagement and platform expansion.

Discussion

The key research questions for this study were as follows: RQ1: What is a clear and comprehensive definition of DNEs for future research? RQ2: What are the key enablers for triggering DNEs in AI platforms? RQ3: Which focus areas should practitioners prioritise to realise DNEs in digital platforms and achieve sustainable competitive advantage? RQ4: What are the emerging research directions to guide the future scope of study in the field of DNEs?

To answer RQ1, we conducted a systematic review of 55 high-quality research papers on DNEs and analysed 5 well-known AI platforms, synthesising the current state of knowledge on Data Network Effects. This in-depth analysis of 55 papers and 5 platform companies provided a coherent and validated definition of DNEs, which has been missing in the literature.

To answer RQ2 and RQ3, we coded the research papers and cases to arrive at 10 enablers of DNEs, which were then put through the Delphi technique to arrive at 6 validated enablers. We then used AHP to rank the enablers, which provides a logical order in which practitioners can adopt them for development and growth within AI-centric digital platforms. The results reveal that two enablers matter most in sustaining and improving DNEs, namely data stewardship and quality, and algorithmic capability. Our case analyses likewise indicate that the most crucial factors in triggering DNEs are data volume, data quality, and the AI algorithms used to derive insights and enhance user experience. This validated result is consistent with prior research, which emphasizes that well-controlled data pipelines and adaptive learning models give rise to the self-reinforcing positive feedback loop in platform ecosystems (Gregory et al., 2021; Haftor et al., 2023).

The quality of the data determines the quality of the insights and how well an algorithm can transform those insights into value as perceived by end-users. The two mechanisms working together attract more people to the platform (Shin et al., 2022), which in turn generates heterogeneous data and improved learning cycles, kicking off DNEs. The third most important enabler was platform governance and complementor strategy, which indicates that platform legitimation and governance are important for users to trust platform owners with their data (Gorwa, 2019), enabling larger and higher-quality data gathering that triggers data network effects.

The ranking gives businesses and their management clear priorities for making informed decisions about implementing AI in digital platforms. According to our findings, platform owners should invest in improving data collection, processing, quality management, and governance systems; in algorithmic quality, enhancement, and transparency; and in developing internal literacy in AI systems management. High-quality data management and AI implementation should be accompanied by real-time improvement of the product and its features, which enhances user experience, attracts more users, and thus strengthens data network effects and business outcomes for platform owners. The alignment of leadership orientation with the governance structure is significant because leadership shapes ethical data practices and the long-term learning infrastructure. By building managerial capabilities to interpret the outputs of algorithms, companies will be better positioned to retain user trust and prevent cognitive overload among end-users.

The findings also underline the relevance of Platform Theory and the Resource-Based View (RBV). Machine intelligence, information curation, and real-time product improvement leading to enhanced customer experiences can be viewed as rare, difficult-to-imitate assets that yield a sustained competitive advantage. Governance and user-centric trust mechanisms, on the other hand, form a set of coordinating capabilities that help align external stakeholders with the strategic direction of the platform. In addition, the self-reinforcing nature of DNEs extends Network Effects Theory by establishing that value growth comes not only from user volume but also from learning and platform enhancement through data and AI.

The combination of Desk/Documentary Delphi and AHP adds methodological rigour to an area where empirical investigations are scarce. It links conceptual synthesis with analytical validation, which enables the structured prioritisation of enablers according to consensus across the literature. This mixed-method format offers a clear path for future researchers to replicate and refine the hierarchy of enablers as the field advances. It also strengthens the use of literature reviews, and of the insights that can be drawn from existing research, to advance theory and inform business practice.

Finally, our systematic review and cross-case analysis, while synthesising the current state of knowledge on Data Network Effects, also illuminate several critical gaps and unanswered questions. To guide the scholarly conversation forward, we structure the most promising avenues for future inquiry into five key thematic areas (Table 6).

| Table 6 Research Gaps and Future Research Questions | |

| Research Gap | Potential Research Questions |

| Algorithmic Design and Comparative Analysis | What is the relative effectiveness and implications for platform competition and DNEs due to various algorithms and algorithmic design choices? What are the adoption costs of sophisticated AI/ML algorithms and their irrational behaviour? |

| Data Network Effects and Value Creation | How can platforms balance between value creation and capture? How can companies make the transition from data control to data sharing? What is the impact of data network effects on competitive advantage? How can value be created for end users through DNE? How does data as an asset have different dynamics than the installed base of users as an asset? How do we distinguish network effects from data-driven learning? What is the impact of cross-user and within-user learning on DNEs? |

| Platform Dynamics and Ecosystem Development | What are the challenges, such as the "chicken and egg" problem, in terms of subsidisation required for each side of the platform to create a positive development cycle? What insights can multiple AI platforms offer into the emergence and operation of data network effects (DNEs) and the dynamics of decentralised platform ecosystems? |

| Technological Architecture and Algorithmic Interactions with Users | How do the dyadic and triadic relationships between data, technology design, and algorithms impact platform interactions? How do regulatory challenges and developing new algorithms and their interplay with the platforms' technical architecture (modularity and openness) enhance platforms? How can platforms overcome algorithm aversion? How can the collaboration between AI and employees impact performance? |

| Ethical Considerations and Algorithmic Bias | How can potential algorithmic biases lead to unfair practices, especially with the automated generative capabilities of GenAI? How can the responsible deployment of AI technologies be ensured? |

Contribution, Limitations, and Conclusion

This study set out to bring structure and clarity to the nascent, yet critically important, academic conversation on Data Network Effects. By systematically reviewing the foundational literature, illustrating the key enablers through literature and mini-case analyses, and validating and prioritising those enablers through mixed-method MCDM techniques, this paper makes several important contributions.

Contribution